I ought to do something about my posture

from The happy place

I had an allergic fit yesterday, causing an intense headache which some people would think hurt a lot, I thought to myself

So I went to sleep; I slept the whole day and it didn’t go away, so I slept the whole night too

Woke up next day at 08:00 feeling tired, really exhausted, isn’t that odd? Must’ve slept 16 hours? Or more? But, there was no headache

I really appreciate the absence of headache

And the sun shining down on me from up above through the foliage on this walkway where I walk facing the breeze

Walk with feet planted broadly like some sort of cowboy

Or sheriff

this world makes absolutely no sense to me.

The older I get, the less I know

absorption 003 - scattered likings

from Semantic Distance

speaking terms / heat wave — snail mail

i started listening to lush because i wanted to post a spotify link of my favorite song on twitter, all in an attempt to get a like from a hot guy i (briefly) met at a house party. that aside, these two songs are some of the best indie rock to come out in recent memory. i’m most impressed with jordan’s guitar playing, which switches seamlessly between pretty abrasive strumming patterns and intricate finger plucking. these songs also pair narratively: speaking terms seems to have the narrator assert their agency over an unloving partner, insisting that they have unknowingly gone too far. despite this, heat wave starts with (presumably) the same speaker waking up in their clothes, having dreamt of them. we also can’t forget:

and i hope whoever it is holds their breath around you 'cause i know i did

on an oddly specific personal note, this song represents expansion. i remember looping this album as it accompanied me walking in between classes my freshman year of college. how crazy was it to be free for the first time?

detour / need for speed / basketball — kim petras

she needed a win so bad that she pulled out an unreleased sophie demo… this shit means something to her!!! no but seriously, i was really impressed with this album in an unexpected way. granted, i’ve been off kim petras since feed the beast came out with lackluster reviews, all of which she probably agrees with given her intentional (and 100% initiated) move away from massive record labels that have stifled her creative vision. even before that… wasn’t there an n-word scandal brought up by old tweets?

aside: i think we forget that miss petras was at the center of hyperpop before its transitional period to becoming that much more mainstream. this was back when charli xcx was signing douches at meet and greets after concerts and every self-proclaimed Twitter Gay was sending mine by slayyyter to all of his mutuals.

anyway… i’m glad she was able to independently create this project with a series of top-notch producers like frost children and margo xs, given that her classic bubblegum pop sound, with its bright synths and opaque percussion, had been flattened by previous collaborators on her record label projects.

funny — broncho

i listened to this song when the weather had just started to get warm in toronto after what felt like an eternal winter. spring was totally eclipsed by subpar temperatures and the need to put on a sweater every time you left the house in early may, basically an attack on my entire bloodline, who lived in dewy temperatures for most of the year. ryan lindsey’s lyrics washed over me as the sun hit my skin, not as a relief from the cold but as a reminder of warmth re: take a moment / for a moment / and i liked it.

the feeling — steve lacy

his voice has persisted throughout his progression as an artist. even if the production value increases, the writing remains honest and unique to lacy. i’m often wondering if the narrators in his songs are aware of their ego. in the feeling, he asks if he’s still cared for (am i your baby?) and states an eagerness to rekindle a toxic relationship (i’m not scared to bleed, you know our history).

the video for this song reminds me a lot of what he did for playground—a dreamy sequence of scattered, colorful visuals punctuated with lacy singing to the camera right in the foreground. he needs to keep kathleen heffernan on his production team ALWAYS!

Stay out of the digital oatmeal

from dsuurlant

Have you ever had a tension headache? Or a studying, thinking headache? That tired feeling in your brain after doing a lot of learning? You probably felt it during your high school exams and while writing your college thesis, and you probably felt it when you were trying to learn something new and really struggling. That’s because… learning things isn’t easy. Your brain has to do a lot of work, running through existing connections and building new ones. Over the course of my career as a software developer I became aware that the more I felt I was struggling, the more I was probably learning. It was only weeks or months after the fact that I could reflect and conclude, “oh, I was having a tough time because I was doing something new, and here’s what I learned”.

Learning things is hard, actually

I once experienced this most dramatically when things ‘clicked’ for me in Object-Oriented Programming. I’d been bashing my head against code for months. My approach was to just copy code from examples and tutorials, assert that it worked (mostly through manual tests at the time; we’re talking 2003–2005, after all), and mumble to myself, “I don’t know why this works, but it does”. I learned that in order to understand something, I first needed to put up with the frustration of not understanding it.

Now, everyone learns in different ways. Some people do great by just absorbing an entire manual and then knowing everything that was in it. Some people do best when watching videos, or having a teacher or mentor explain it to them. Me, I learn best through imitation, followed by examining what I just copied. “I built something that worked because I did it like this, but why does that work?” Rather than making sure I get it all perfect and understand it perfectly before I build anything, I learn by doing and then reflecting on what I did. (I daresay I’m not unique in this; in fact, most developers learn like this, which is why they’re great developers.)

I remember quite vividly the first time I typed something in Java like `Button button = new Button();`. At that point, I didn’t know what a class was, or an instance, or an object. I just knew I had typed four words, three of which were the same word, and I thought that was really funny. That amusement piqued my curiosity, and so I learned what those words meant in that context.

Why am I saying all this? Because obviously, nobody wants to learn anymore.

I think with the advent of AI-everything, we’ve kind of forgotten that learning things is inherently taxing, frustrating, difficult, time-consuming, and just, like, annoying to do. It burns energy, it gives you a headache, it might even make you feel bad about yourself. Because that’s what learning feels like! You don’t start at A and then magically, frictionlessly, arrive at Z. You gotta walk the steps.

But AI allows us to skip many of those steps. It has the capacity to think so you don’t have to, then give you the bullet-list, bolded-keywords, easily-readable version. But in my previously established pattern of learning, if AI writes the code for me, and I then review it by asking about what it just wrote, maybe I’m still learning, though? Maybe… maybe not.

Because I also distinctly remember that typing out the example was much more effective than copy-pasting it. In a similar vein, if you really want to commit something to your brain, write it down with your own hand. Physically. On paper.

There’s an increasing amount of research pointing to how heavy use of LLMs decreases your brain’s capacity to think critically and learn things on its own. “Dumber” or “stupider” is quite an incendiary label, and I prefer to be a bit more precise about it, but the accumulation of cognitive debt is a real thing. And that’s because of the alphabet-journey I described earlier. If you’re skipping steps, you may get there faster and more easily, but you simply won’t have picked up the learning along the way (the ‘debt’ the research points to).

Now think about how much time and effort you spent on education during roughly the first 20 years of your life. School was hard work. Homework sucked. Studying for exams and taking them was so tough you might still have dreams about it. Writing your thesis was probably one of the toughest things you had ever done cognitively, at least up until that point. This is not to overvalue traditional education (there are plenty of other ways to learn: on the job, self-taught, and so on). But my point is, none of that was easy. Your brain was working hard.

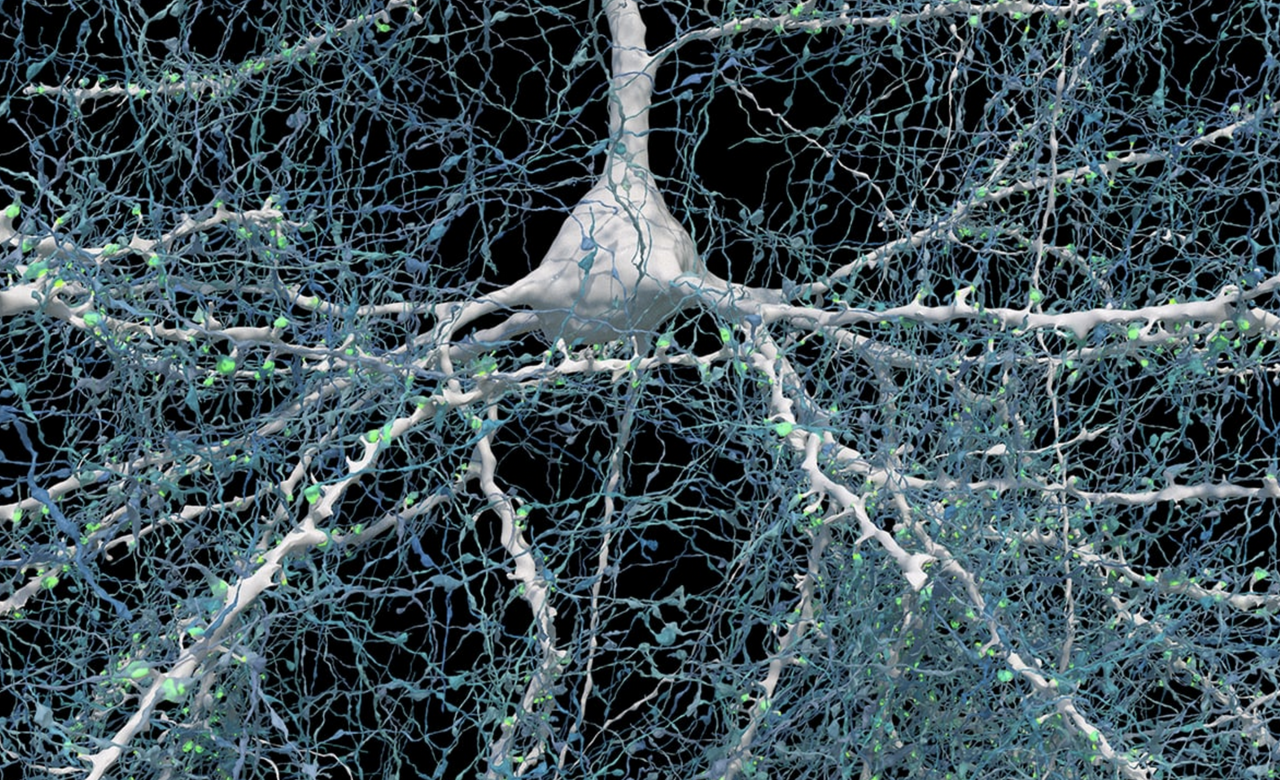

And it’s beautiful. (Source: Unseen details of human brain structure revealed, Google Research & Lichtman Lab, Harvard University. Renderings by D. Berger, Harvard)

I’ve been thinking about this a lot because last year I went through a period of using LLMs quite intensively. I didn’t feel like I was getting dumber at the time, but that’s the thing: if I was, how would I know? This is the cognitive pitfall. If you are truly losing your thinking skills, then you won’t be able to fully catch it and prevent it from happening. That’s what alarmed me. I was like, “well, I think I’m critically reviewing this thing’s output and still using my own thoughts and judgment, but if I wasn’t, how could I be sure?”

The answer maybe is “if you’re at least still questioning that, then you’re good”. At the very least, it’s probably better to doubt yourself than to just assume that whatever the LLM responds with is always correct. If you’re not verifying the output in any way, then you’ve probably already been led astray and you’re not even aware of it…

Anyway, I’ve only been talking about learning so far, but there’s another aspect to this I want to bring up: creation.

Creating things is also not easy, turns out

Just like learning, making things is hard. Truly sitting down and making something out of nothing with your own mind and body is difficult, time-consuming, exhausting, challenging… and you often have to do it a lot, and deliberately, for it to even turn out kinda decent. This was humanity’s shared truth for a long time. Even when things came along that made creation more convenient, it still wasn’t easy. It required real cognitive effort. It’s why professional artists, musicians, and writers often struggle with a creative ‘block’ where they just can’t synthesize something new; it’s just that hard sometimes. Especially if they’re faced with their own perfectionism: knowing from talent or expertise what they want the result to be, and then not being able to get there.

The process of creation and the process of learning are very similar. When you make something, you are learning, and, as in my case, in order to learn anything you often have to go through the process of creation. An obvious example is knitting: you can’t learn how to knit by just watching videos. Your hands have to actually make something. A scarf, a beanie, a blanket. You make mistakes along the way, and you learn, and your knitting improves. You make fewer mistakes. Your stitches are more uniform. You knit faster.

AI makes it trivial to make something out of nothing. Using only a prompt, you can generate entire essays, songs, graphics, animations. I wonder whether, because historically we’re used to creation being hard and thus valuable, we haven’t adjusted to this reality where creation is easy, and we still value it as if it cost a real person blood, sweat and tears, when in fact it just cost you tokens. Because I’m curious and want to understand things, I actually played around with these generators, and I found that the quantity is huge and the quality is just… not there. Certainly not the specific quality that I appreciate in any creation, which is the human quality.

I mean, you’re reading a blog post where every word was typed by me (yes, really!). I’m the woman who burst into tears the first time I saw The Sunflowers by Van Gogh and The Water Lilies by Monet. Real, human-made art affects me deeply, and I’m kind of hyper-sensitive to any creation that lacks that human quality.

That’s why over the past months I’ve grown increasingly frustrated and exhausted and annoyed with the AI slop that is just… everywhere. You see, I don’t necessarily mind if things are made with the help of AI (‘help’ doing a lot of heavy lifting in that phrase). Like, I get it, especially in a corporate context. We want more profit faster, and what better way to get it than with automation instead of slower, more expensive humans? (Although by now it seems humans are the cheaper option.)

What truly grates on me is the bad quality of it. Hands with too many fingers, graphs that are melting, words that are mangled, eyes that just aren’t quite right. Every blog post and LinkedIn post that now reads the exact same effing way (which is why I am adamant about typing every word here; if it still ‘sounds like’ LLM-speak, that’s because I have unfortunately been influenced by reading and using it too much). Every single time I read “That’s not X, that’s Y” or a bullet-point list with sentences that don’t really say anything. UX designs that all look the same. Video thumbnails that all look the same. Everything is just the same, uninspired, AI-generated sludge. And it sucks, and it’s boring, and it’s just a waste of time to read or watch and a waste of resources to generate.

We can do better. Even if you want to generate things to get a head start or whatever, you can still use your own judgment and add your own flavor. Hell, if you’re adamant about generating all your social posts, at least teach the LLM to write like you so it’s not the same as every other post out there nowadays.

Humans are imperfect, so everything we make ourselves is imperfect, which is exactly what makes anything interesting. I love reading something that’s clearly written in someone’s own voice and style. I love listening to music and seeing visual art where I can tell it carries the maker’s characteristics. Not everything has to be smoothed over. More importantly, if everything that’s created is the same, then why even do it? What’s the added value if it’s not expressing who we are and what our own story is? Am I the only one deeply annoyed by how samey everything is getting? (That’s separate from every other criticism levelled against AI, mind you.)

The real kicker is, as I said, I’m not fully opposed to it. But what I see happening is that the “actual helpful use cases” are blurring together with “garbage output”, probably exactly because using LLMs intensively decreases your critical thinking skills. In other words, you might start out using it critically for specific applications, and end up not being able to distinguish quality stock photography from melting architecture and polydactyl people. You stop seeing what the big issue is. Endless LinkedIn posts full of “That’s not X. That’s Y” don’t even bother you anymore. You’re deep into the slop pool and the feeling of everything being that same gooey AI texture starts to be comfortable, like your mind sinking into digital oatmeal.

I’m not comfortable here. I keep trying to use AI tools in meaningful ways, but I can’t do that and also tolerate all expressions of human creation and communication turning into grey goop.

There is real, measurable, significant value in the things we make ourselves and in the process of learning and creation, exactly because it’s hard. So go out there and make something yourself today! It’s worth the effort.

Because effort isn’t the enemy. Every blog post written like this is.

Especially if it ends like this—hitting hard.

With lots of periods.

And em-dashes.

HELP MAKE IT STOP NOOOooo—

from Roscoe's Quick Notes

Hoodies

from Tuesdays in Autumn

Among YouTube's better suggestions was to start showing me – around three or four years ago – home-made videos by the New York-based trio New Jazz Underground: this one, for example. For some time thereafter I kept up with their activity on Bandcamp, hoping for some of their music to appear on CD or vinyl. More time passed and eventually I stopped looking. By happy coincidence though, just last week something else on YouTube alerted me to the recent arrival of the trio's debut album Hoodies. A copy arrived here on Friday.

It's great to finally hear them playing in a studio setting, where their talent & technique shine, with no loss of the soulfulness & spontaneous charm that was obvious in their YouTube days. Most of the compositions on the album are by bassist Sebastian Rios, and he performs solo on one of the tracks – the marvellous ‘Las Salinas (Prelude)’. Saxophonist Abdias Armenteros demonstrates a clear and beautiful tone, not to mention a fine singing voice, which we hear on two songs. Drummer TJ Reddick meanwhile shows equal facility with metronomic grooves and more elastic time-keeping. It’s a highly enjoyable record.

Another week, another old anthology of translated poetry, this one German Poetry 1910-1975, edited and translated by the estimable Michael Hamburger. It's a successor volume to an earlier one (Modern German Poetry 1910-1960) that he had co-edited with Christopher Middleton. In his introduction, Hamburger writes that, in place of the ill-defined notion of ‘modernity’, he substituted “a criterion quite as vague in itself, but meaningful as soon as it is applied to specific poems, specific poets: the criterion of authenticity, an authenticity usually bound up with novelty of one kind or another...”

I was already at least slightly familiar with the work of a number of the poets included (Rilke, Trakl, Brecht, Huchel, Bobrowski, Celan, Bachmann & Enzensberger). Among those whose names were new to me, a couple that stood out were Yvan Goll and Ernst Meister. Also very interesting were the poems by authors better known for their prose: Robert Walser, Thomas Bernhard, Günter Grass & Peter Handke. The book is organised chronologically by the poets’ year of birth, which works well up until the end, where a variety of the youngest authors (perhaps then still not well-established names) are represented a little unsatisfactorily by a page or two apiece.

Cheese of the week – Baron Bigod, which must be up there among the best of English cheeses, akin to a very good Brie de Meaux. From the Fen Farm Dairy website: “Beneath the nutty, mushroomy rind, Baron Bigod has a smooth, silky golden breakdown which will often ooze out over a delicate, fresh and citrussy centre.” I first tasted it a few years ago, since when I've returned to it several times, finding it reliably excellent. I bought a ‘Baby’ 250g cheese (Fig. 26) from the Town Gate Butcher's shop in Chepstow on Saturday.

Herbal Resources

from Better Health Through a Better Mind

Photo by AS Photography from Pexels: https://www.pexels.com/photo/purple-petaled-flowers-in-mortar-and-pestle-105028/

“HERBALISM AND HEALTH RESOURCES I’VE USED”:

True Cause of Death?

from Better Health Through a Better Mind

Camp Nelson Military Cemetery – image by Loran Joly on Armed Forces Day, 2026

These days, someone’s death certificate may say they died of “HEART DISEASE”, “CANCER”, or “ACCIDENTS”…

CAUSE OF DEATH?

The MODERN AGE?

An age FULL of ANGER and FEAR?

Today I introduce this article I wrote; it has information on herbs, following Dr. Edward Bach, and much, much more:

“Cause of Death: The Actual Causes or the PSEUDO-Causes?”:

https://medium.com/@loranjoly/cause-of-death-the-actual-causes-or-the-pseudo-causes-f9383503c1f2

We might also see:

“The Neurotic Personality of Our Time – Karen Horney – Summary”:

https://youtu.be/WMGE4C4AD_0?si=UCGuvGsdbBLCSDkb

One For the Men

from brendan halpin

Something for the men in the audience because I think a lot of us don’t necessarily get explicit training on this.

I was fortunate enough to be trained as a high school teacher, so I did get explicit instruction on this: I was told to not be alone with students with the door closed, to not touch or hug students, and to be constantly aware of, basically, the worst possible interpretation someone could put on your conduct.

“But I’m not a teacher!” you say. Okay, but the same rule applies. You’re gregarious and social and want to talk to people but have no creepy intent? Sorry, but creepy guys have ruined this for you.

“It’s not fair for people to assume I’m creepy!” That is true. It’s also not fair that women get sexually harassed. They’re playing the odds here, willing to forgo knowledge of you personally in order to protect themselves from potential creeps. You don’t want women to consider you a potential creep? You need to go out of your way to show them that you’re not.

Let’s start with physical space. If possible (obviously if you’re jammed into a packed subway car it’s not, but otherwise), give women more space than you think they need. And if you’re walking in the same direction as them, maybe cross the street, or slow down to give them space, or speed up to get past them. Just send the message that you are about your own business and not trying to interact with them. “Geez! That seems like a lot of work!” It’s not actually that much work. It’s just a small exercise in empathy. Now obviously if you’re on a crowded street it’s different, but if you’re the only ones on the block? Especially if it’s nighttime? Give her some space. Now give her some more space.

Now on to conversations. Again, you need to remember that every time you open your mouth to talk to a woman you don’t know, you’re setting off her creep alarm. Perhaps your intentions are innocent, but what’s happening here is especially unfair because you get to be relaxed and she gets to be tense, waiting for the conversation to take a turn, or just resentful because she doesn’t get to decide whether she’s having a conversation on this flight.

“But people like to talk to me!” Do they, though? Because you should know that most women are very good at humoring men. Perhaps they’re like the woman I saw on a recent train ride who spent the entire length of Connecticut being regaled by a guy, said, “it was such a pleasure to talk to you!” to him as she got off the train, and then slumped, laughing and exhausted, against her companion as soon as she was off the train and out of sight.

Now if you’re a gay man or a trans man, do these rules still apply? Yep! You still need to give women personal space and assume they don’t want to talk to you.

But what if you’re neurodivergent? Irrelevant! Giving women extra space and not forcing conversation on women are within the capability of every single neurodivergent person I know. Except for the ones who use their neurodivergence as an excuse for being an asshole. Don’t be that guy.

But how will I flirt and find a romantic and/or sexual partner? By meeting someone at a party, or being introduced by friends, or because you’re both working in your community garden plots or because your kids are in the same first grade class or whatever! Demonstrate that you are a person with interests and not just a random perv, and then women will talk to you! If they feel like! And not if they don’t! And that’s okay!

Day -1

from Out of Office

This marks the day before my last day. It could be one day, one week, one month, or longer… only time will tell how long I'll be out. I have not felt the same amount of motivation to keep up this blog as I did last week when I started, but I think that is what makes it a good challenge. I also think the emotional toll will start showing more as we continue.

Now I feel like I procrastinated on the last bit of what I have to do and left it entirely for the last day. I need to finish up between today and tomorrow, so we will keep this short.

Day -2

from Out of Office

This is my last Monday. I feel tired today and don’t have much else to say.

Day -3

from Out of Office

I would have probably sat with some uncomfortable feelings today had I not signed myself up to volunteer for eight hours. I am dreading the next few days of work a little bit, but mostly because it is my last three days and I am feeling tired. There is also the fact that I don’t actually have any work to do so I am really just going to hang out but not do anything besides sit at a desk in front of a computer. I can’t even try to make myself useful, since I would only be able to complete projects that don’t take more than three days.

It hasn’t fully hit me that after Wednesday my schedule will look a little different. I am taking it one day at a time, but I am ready for rest and time to reevaluate a lot in my life.

from G A N Z E E R . T O D A Y

“Just hang in there.” – Suzanne Vega for The Creative Independent.

“An indie horror with internet origins has beaten the legacy franchise ‘Star Wars’ at the box office this weekend.” – NBC news on the unexpected success of BACKROOMS and OBSESSION.

Migraine day today; no productivity for me.

#radar

from bios

11: What Then Must We Do?

The first mission is in motion before dawn, in the cold damp hours steaming from blankets and pallets, they head out into the mines, down in the trash of last night, cans, bottles, cardboard, treasure, separating into black plastics for the scrapyard scales. They range slow burdened and sure, investigating and scrutinising, every find is a fragment closer to a piece, a cap, a packet of two rand biscuits.

The scrapyard opens to a long line of black plastic bags on backs, of claimed wheelie bins, jostling to exchange their loads for caps and pieces to break the downs. And then they head to the once-suburban house that now houses the HIV program and the morning methadone handouts. The line stretches from 7am until the 8am cutoff, or until the methadone runs out. The social workers hand out two doses – one in your mouth, one for twelve hours later – in a small container which has enough space to spit in the second dose.

Methadone is not for taking, it's for trading. On Fridays it's six full doses for the weekend, valuable to trade during the regular Sunday drought. One dose is a third of a cap in cash. There is nothing else to do with the methadone; Sunday makes entrepreneurs of us all.

The skarrel, the spin, continues in the drug houses, at the traffic lights, outside the petrol stations, as the clients pass out, as the clients come in, and at the feet of the dealers.

The Sunday desperation ends in the vans or with the vans. Either you are put in a van or you trade with a van. The dealers try to mitigate the afternoon pimping wave with the morning dash, but they never have enough. Someone will always try to wave down a van to kill the downs.

Sunday morning mines are good for those up early enough, but Saturday nights are full of opportunities and end in dawn cutouts, and afternoon withdrawals.

Desperate enough to mission deurmekaar, the double pants tied badly, the lookout missing something, the phone theft fumbled, the risk of being munged. As soon as the risk lives in the front of the brain, the risk becoming certainty. As we pass each other, upping and downing from skarrel, spin, mission, we greet…

“Morning, how’s your Sunday?”

“Things are bad.”

“Yes, things are bad.”

There are those who do not risk the mung. They work with the mapusa. These are other risks.

Sitting on the corner, just enough away, among the paras, spinning for dots to take the edge off. I am watching the dealers and mapping the stash places.

Three blocks down the hill, around a corner, shuffling from foot to desperate, the mapusa are just not coming fast enough. As the van pulls up, I jump in, they drive, we are bunched up and the second cop wrinkles his nose. There in the shadow of the basketball courts, sketched out on the back of an arrest warrant, I do my best to map the stashes.

And then I wait. They take twenty long minutes to come back, they couldn’t find it.

One of the mapusa gives me a fifty, tells me to go smoke, but double check the stash.

I return to the basketball courts. The van in the concrete shadow. I redraw the map. The stash has moved. Mapusa move quickly now. I wait and smoke.

They take one long hour to return. The longer they take the more likely it is that they were successful. They need time to let the dealer come around to offering them money. Even with the regularity of this practice, time must be taken to pretend it is not expected. With a fat pack of maybe twenty thai they return, throw it to me in passing, even some pieces.

When later the dealer works out that I had pimped them, catches me with the remnants of their stash, I am too numb to notice the beating.

On some corners Sunday’s bags cost five rand more. The dealers know they will have to pay the mapusa.

On Sundays things are bad.

At the age of twelve I fell out of a tree, hit my head on a rock and lost my memory. I had to relearn who everyone was, vocabulary, how to write. It set me back at school. My mother used to say that the person who went up that tree was different to the one that came down.

This is a lie.

Uncovered nearly thirty years later, in a series of therapy sessions that someone else had insisted I attend, and had organised, because I had been unable to afford anything at all. A lie I had constructed for myself.

There was a tree, and a fall. And a different person did eventually emerge.

The truth, that I had had an idyllic childhood, was too hard for me to bear. Slowly over the period of my teenage years, I came to believe in an easier idea, that I had amnesia, that a minor childhood fall had erased any lingering happiness.

My father wanted to start a construction company, and he wanted me to work there. I know this because there was a sign in bronze outside our house that said C.D. Young & Sons.

There was only myself and my sister. My father wanted me to work with him, I know this because from as far back as I can remember, even after I had left home, he would take me to construction sites of shitty suburban houses and try to show me the ropes.

My father was a travelling salesman, I remember only now the trips to the midlands, a truck full of vacuum cleaners. Waiting in a corner shop playing Donkey Kong, waiting for my father to return from a delivery.

My sister used to speculate that my father had had an affair, I remembered this only after I had been told by my mother that I had met my half brother when I was twelve.

My father was a kosher butcher who had been disowned by his father, I remember my father watching the Jazz Singer relentlessly for as long as he lived.

My father began to withdraw and he started to drink around the time of my amnesia. Any support he had had for my ambitions to be a writer evaporated. All I remember is him pressing me to stay and be part of the imagined family business. He let me leave to follow my dreams, and on the drive to a new town, away from my imagined miserable life, we stopped at a desert motel where he made one last attempt to convince me.

Sitting by a steaming swimming pool in the residual heat of the day, around midnight maybe, perhaps new year's eve, the chlorine in our nostrils, he cried. And for the next twenty-seven years I believed that he cried because I had disappointed him in some unimaginable way, and I resented him for putting that on me.

In a therapy session I had spent years thinking unnecessary, that someone else had paid for, that took place decades after my father’s passing, I uncovered a memory. He had once worked for his father, who had had a construction company called E.L. Young & Sons.

It is all so indeterminably wrapped up in itself.

from Lastige Gevallen in de Rede

Pressvertisement: Thanks to Datae Banks, S_eated in the dotCom of Time

At some point you start to fidget and keep shifting position because it no longer sits comfortably, but not in our seats. We developed them with specially, universally certified Sitting Power, which lets you while away the ages at that one unique location where you had our chair installed. Other chairs and sofas soon grow tiresome, at a certain moment become possessed by evil spirits, and devour energy, when the very reason you sat down there was to stop that from happening. Out of the discontent and restlessness caused by sitting wrongly, you build an empire of stuff on your seat around your body and limbs so that you are less aware of all the commotion in and around you, nervously wriggling and rooting about in muscles, organs, nerves and blood vessels while you suffer enormously, slumped on and hanging from your defective seat. Seated on any of our Datae throne seats, by contrast, you come to a very deep rest. All thanks to the Sitting Power developed in our lab. We tested it for years before launch, every day, at every moment and in every way, subjecting the sitters to every possible challenge, every cause that on any other seat would make you do something that uses unnecessary energy, energy that in our view is needed only for sitting very still and gazing at a fictitious point somewhere in front of your soul's eye: nothing more, and certainly nothing less than truly nothing.

Our customers are therefore one hundred percent satisfied. In fact they all say, 'Thanks to your seat, pinned down in my house, I have finally really learned to sit.' 'Sometimes it takes days before I get up, and in the meantime I enjoy every pointless moment. Without a really good reason I don't even get up anymore: not for the doorbell, not to receive parcels, not for a natural disaster or a plague of insects, not for the guide or for visitors. It's all sitting just fine. So why would I bring all those problems on myself? I recommend this seat to everyone!' says Van Voorbijgaande Aard, one of Smægmå's best-known residents and, what's more, our longest-sitting customer.

We do advise you to stand up for a few minutes at least twice a week, mainly to recharge your sitting level and for a deeper seat intensity. Your energy consumption on our Datae sofa or chair is so low that you need barely more sleep than the sofa has already scheduled for you; sleeping sitting up is, by the way, much healthier too. A few grams of food are enough for 28 hours of seat pleasure, and with two small handfuls of peanuts and a banana you will easily get through the working week. As far as we are concerned, once you are settled in the spot you purchased, you never need to leave it unnecessarily again. And you know what? Sitting on seats other than ours is proven to be unhealthy, bad for your body and the mind that comes with it, but with us it is actually better for you. Your life improves without question, and you always have enough energy for doing absolutely nothing, and plenty of appetite for it.

The few things you still have to do on the move, you will do quickly and well, all thanks to our in-house sitware and Sitting-Powered concept, which greatly improves human labour, concentration and focus on brief interactions. Besides, you will of course want to settle back onto your Datae throne as soon as possible, so you will act faster and better anyway. Let Datae regulate your daily existence right away, and everything will instantly sit tight. Datae thrones deliver perfection for a life centred on the gluteal cleft. Sit! And down! No, no paw.

from ThatNorthernBloke

Episode 1 | Careless Whispers

Wakefield. July 2025. Dusk.

I’d had a tip-off that an old friend had fallen on hard times. As I walked under the arches of a disused bridge, broken glass cracked underfoot. Dogs barked. Couples argued. And with every step, the whispering got louder.

As I rounded a corner, I saw the shadow of something that, once upon a time, might have been a man. But now? The hair was long and shaggy, like a rabid dog had been given access to Just For Men and a mental breakdown. Nails stretched beyond what any sane person would consider acceptable, and a guttural noise began to fill the alleyway.

It couldn’t possibly be, could it?

“Barry?” I whispered, the words itching to come out but struggling at the same time.

“Agruondkjbwoin.”

Right then…

You see, since our escapades in FC26 had come to an abrupt end due to the fact that, well, it’s a fucking terrible game, times had been… difficult for Barry.

He was offered the Andorran U15s job but declined, stating that the mountain air would cause such a severe allergic reaction that he would have to be placed into a six-month induced coma.

Since then, there’d been nothing. And with no outlet for his strange little creative-yet-analytical brain, he’d started to go a bit… loopy.

I’d lost all contact with him when I returned home to Wakefield, but I did sometimes think I’d see a man lurking around Trinity Shopping Centre, hiding behind bins and old men named Jim.

I’d brushed this off as just my imagination, but now? I know the truth was much more desperate.

I knew that I needed to do something. I couldn’t leave him in this gibbering state, primed to get sexually assaulted by a badger, or worse, one of Wakefield’s finest ladies on a hen night.

I put my arm around him slowly, gently, and cradled his head for a moment.

“There, there, Barry, we’ll sort you out, mate.”

“Hear me, distant albatross, the winds of chakfoib2foinwl…” he mumbled.

“What’s that, mate?” I politely asked.

“Hear me, distant albatross… the winds of change may carry you… to far away lands… in search of eternal… glory.”

Oh no. A prophecy.

I’d not heard one of those since he saw Tim Howard in his cornflakes. But this time, I knew what was coming.

Football Manager.

The Pentagon

Sadly, Barry’s prophecies are never about lottery numbers or affordable energy bills. They are, almost exclusively, about ruining my free time.

His prophecy mentioned far-away lands, which can only mean one thing: the Pentagon Challenge.

The longest, most difficult task in Football Manager, the Pentagon Challenge tasks you with winning the five major continental club competitions:

- The UEFA Champions League (Europe)

- The CONCACAF Champions Cup (North America)

- AFC Champions League Elite (Asia)

- CAF Champions League (Africa)

- CONMEBOL Libertadores (South America)

And the hardest part? Start unemployed. No prior experience. No coaching badges. Except…

I do actually have a UEFA C Licence Coaching Badge. So I’m using it.

The Beginning

We’re going to begin our journey somewhere that will actually take a Sunday League jobber and his washed-up, psychic assistant manager… Asia.

Once Barry had been hosed down, shaved in the areas legally required, and placed within shouting distance of a laptop, we got to work.

Our first three applications are Kumamoto in Japan’s second division, Tochigi SC in Japan’s third division, and YB Longding in China’s First Division (who, weirdly, can’t sign non-Chinese goalkeepers. No, I don’t get it either).

Ten long days passed with Barry and me sitting by our fax machine. I’ve actually no idea why, because it’s not plugged in and no one uses fax anymore. Instead, we sat bolt upright as an email notification popped up, only to find it was HelloFresh sending us an offer for 10 free boxes.

As enticing as that is, we need a fox in the box, not fish in the post. But then… A JOB INTERVIEW!

YB Longding have got back to us and offered us an interview. First question: why don’t we speak Chinese? It’s not a bad question, to be honest, as it’s hardly a niche language.

Luckily Barry did an internship at a Chinese fishery when he was 27 and learnt enough to get by, and I lied and told them I can pick it up quickly (I absolutely can’t).

I then got asked why I was in the market for a number of jobs, and unfortunately there wasn’t an option to say ‘obviously because I’m out of fucking work you morons.’ Instead, I told them I’m merely considering my options.

Next up, they asked if I’m comfortable working with limited resources. Well, I’m currently working with no resources, so yeah, go on then.

Then came the big one: could I take them to the next level? As someone who took Halifax to world domination on FM Mobile 2005, I’m pretty confident I can do the job. Next!

I was then asked what changes I’d want to make to the backroom staff… well, I think my lads like Fat Rob the physio would follow me to the ends of the Earth, so I told them I’d have to take a look at everyone should I get the job (and immediately bin them off).

Finally, I was asked if I was happy to work with Xu Bo, the director of football. Unsure if this was Susan Boyle’s Chinese cousin, I just said yeah, why not. At least there is a director of football.

I had no requests, so I closed the Zoom call and sat back in my chair. Our first interview was done and dusted, now it was just a waiting game.

For five days. When we found out we didn’t get it.

Fuck.

Two days later, the Tochigi SC board informed me we’d been unsuccessful, and this suddenly felt like it was going to be much more difficult than we first thought.

During Sacktober, when FM clubs start firing managers like it’s a government initiative, Fukuoka, Thespa Gunma, Jubilo Iwata, Nagoya, Peng City, Yokohama FM, Daegu, Dewa United and Incheon United reviewed our application and collectively decided: absolutely fucking not.

Barry took the news badly, by which I mean he spent three hours facing a wall and whispering “Incheon” into a mug.

Southport offered us an interview; we declined. We’re not going for Europe yet.

Then, come December, we get two interview offers, both in Japan. The format is largely the same as before, as both Hachinohe and Ryukyu grill me on why I don’t know Japanese, whether I can keep a happy dressing room, and whether I want to stay for a long time. Obviously I lie like a Prince to make sure I say exactly what they want to hear.

AND WE DID SOMETHING RIGHT. A few days later, Ryukyu got in touch offering me a £1k-a-week contract to take over in the J3 League.

Barry licked the contract, declaring it “legally moist” and immediately began learning Japanese by shouting at Duolingo. We are fucking back, baby.

Boiled Eggs & Betrayal

On our flight over to Japan, Barry spent the entire first half of the journey drawing tactical shapes on a tea-stained boiled egg. I didn’t ask why. You learn not to.

As the cabin crew brought our evening meal, Barry grabbed one of the poor air stewards, Colin, by the collar. Terrified, but somehow unable to pull away, Colin could only listen as Barry named the three players he believes will betray me. One of them is Brazilian. One of them is a 16-year-old. And one of them is, somehow, me.

With that, Barry snapped back into his chair and instantly fell asleep for the final three hours.

Aurora

from 下川友

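On the morning of the day I arrived in the northern land, I saw fish frozen beneath the frozen water. It was as if only the time of living things had been left behind on the far side of the thin ice, while only the time on this side was being pushed forward by the wind.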

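For several days before that, things had been going strangely smoothly. It had been odd ever since yesterday, when all my paperwork was accepted in one go. I think I got carried away because everything had gone through at once. I felt I was being swept along by some great current, and there were even times when I stubbornly rejected scholarship. It was because the world seemed to be producing answers on its own before any words could explain the reasons.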

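Even so, the anxiety did not go away. If I had not seen the aurora today, I would have been disappointed. So, just in case, I was holding a circus ticket as insurance. It was my way out if the night sky came to nothing. But in truth I had no interest in the circus. What I wanted to see was not a miracle made by human hands but whatever the sky itself might draw on a whim.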

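At the inn, the hotel staff's excessive attentiveness was somehow comical, and even their behaviour, apparently meant as a kind of atonement, looked theatrical. Perhaps because I had happened to see two strangers kissing the night before, everyone's emotions felt slightly performed. There are apparently songs in the world that are premised on being interrupted, but to the very end I never understood how they work.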

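As evening came, the plain rapidly lost its blueness. It was not that anyone had said it would be better to shine a light. There was simply a suspicious shadow in the distance, and only the beams of flashlights stroked the surface of the snow. I was waiting for the scene to become lines rather than a picture: for the moment when the still landscape would begin to move, its outlines unravel, and it all melt into the sky.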

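While I waited, I picked up my flask. In the past, looking into a dark hole had filled me with a groundless unease, but now I felt that perhaps I would not be afraid to look into the flask. It was funny, too, that an air pump lay near me right to the end. I had never once needed it during the trip, yet for some reason I could not bring myself to throw it away. Only trivial objects like that kept the weight of reality.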

Eventually, faint streaks appeared in the sky.

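At first it looked like a smear of colour drawn by someone's paintbrush. But gradually it flowed, bent and multiplied, and the scene truly became lines. It was the first aurora I had ever seen. Swaying quietly overhead, it was not an object to be understood; it simply existed for the sake of existing. In that moment I understood, just a little, how someone might want to say something as extreme as "I'd rather die than know what's in my food." Some things are lost once you break them down. Some things drift away once you explain them.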

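As green and purple bands kept crossing the sky, the one thing I never understood, to the very end, was how people with hunched backs feel. With something so magnificent overhead, how could anyone walk looking down, I wondered.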

In the end, I was lucky enough to see it.

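The circus ticket stayed folded and creased deep in my pocket. The light of that night was far more real than that scrap of paper nobody had used, and more real than the fish beneath the frozen lake. The sky kept swaying quietly, and beneath it I felt as though I alone had been accepted by the world, just a little late.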