Quietly withdrawing consent

from Dave Amis

The broken system we have to endure only continues to stagger on because the majority of people have yet to withdraw their consent. That sounds like I'm going to condemn the majority for continuing to go along with a system that doesn’t reflect our desire for a better, more meaningful existence, doesn't it? Well, I'm not going to condemn people for appearing to go along with a dysfunctional, increasingly dystopian system because that's not my style.

With the way things have been set up, the vast majority of people are too busy slogging their guts out trying to make ends meet to build whatever life they can manage in a failing system. After an exhausting week, understandably all they want to do with whatever downtime they may have is to try and chill out as far as possible between all of the life admin and other crap that's part and parcel of modern life. What gets me is those people who claim to have 'woken up' calling out everyone else for being so called 'sheep' and ‘normies’. That's the kind of elitist crap that gets me riled up.

In the years since the Covid 'crisis' broke, a decent number of people have become more alert to what's being done to them in the name of the 'Great Reset' and why it's being done to them. Not all of them saw through things at the outset. It took me a few months before the pieces started to fall into place and I could start to see through the narrative I was being fed. Some are only just realising now what was actually done to us. People will come to see things at different paces and in different ways. Those who claimed to see through the narrative from day one and who roundly condemned those who didn't, need to take a long hard look at themselves.

One of the reasons why this is the case is that what some dubbed the 'freedom' or 'truth' movement was never really a cohesive enterprise. If anything it was an uneasy coalition of convenience for a fairly wide range of people. One that was never destined to last. So, it shouldn't come as any surprise that the messaging from those of us opposing what has been done to us over the last few years wasn’t exactly clear or consistent. Such is life. It's down to those of us with a platform to work out how we can communicate the need for the crumbling system we have to be replaced from the grassroots upwards with something that meets our needs on our terms. It's also important to do so without hectoring or preaching. This is not an easy task!

If you talk to most people in depth, they'll most likely admit they know things are falling apart at the seams and that it's harder to trust those in authority to look after their interests. What they don't feel confident about is how to set about making a challenge and start to build something better. Given the daunting scale of the task, it's understandable that most people feel that all they can do is keep their heads down and hope for the best.

I haven't got a blueprint for how society could look after any radical change. Anyone coming along with a blueprint and a ready made plan for change needs to be treated with the utmost suspicion. All I have are some tentative suggestions and principles, based on an understanding that most ordinary people can be trusted to do the right thing. This is a work in progress and I don't claim to have a magic formula when it comes to succinctly communicating an idea that will make people stop and think, and then start to ask some awkward questions.

One thing I’m doing my level best to do is to encourage people to start quietly withdrawing their consent for the system to carry on functioning as it is. I recognise that most people's circumstances mean that they can't fully opt out and go off to live in a self sufficient community of like minded people. That's how things have been set up to ensure that we remain trapped in their shitshow of a system. That's what they want to think anyway...

Quietly withdrawing consent can involve a pretty wide range of actions, each of which has the potential to throw a small spanner in the works. When taken cumulatively, they can end up throwing a massive spanner in the works. It starts with getting out of the cycle of constantly upgrading and instead, making stuff last longer with an emphasis on repairing rather than replacing. Questioning whether you actually need all of the stuff that surrounds you is the next step. That involves reassessing priorities. Rather than sitting isolated at home glued to a screen, get together with friends and neighbours for face to face interaction and analogue amusements:) Taking control of at least some of your food supply by growing and processing it yourself is another way of breaking out from the system. Again, it's another endeavour that's better done in tandem with others as it will build strong, independent grassroots networks.

There's also growing resistance to the digitisation of ever more aspects of our lives from buying train tickets to the attempts to get us to abandon cash and pay for everything using so called smartphones. So, what to do? There are no easy answers to this. The bastards are digitising many aspects of our lives, making it harder and harder to live without an Internet connection, let alone a smartphone. A smartphone in our pockets that acts as a tether to what’s becoming a digital prison. If we ditch the smartphone and the Internet connection, the bastards have made damn sure that our lives will be very difficult indeed, if not impossible. They’ve engineered it like that so we find it harder and harder to escape the control grid they’re herding us into. Getting people addicted to the social media available on their smartphones is a part of the plan. This is something that as a self confessed, seventy year old Luddite, I feel strongly about and intend to explore in some depth in future pieces.

Then there’s the news agenda, or what passes for 'news' in the age of nudging, fear porn, divide and rule and full on psy-ops. It's an agenda designed to keep us in fear, hoping the authorities will come up with a solution to the hassle and shite of everyday life. When a fair number of those problems appear to be designed to generate a strong reaction followed up by a 'solution' from the authorities that will suit the interests of the powers that be while further diminishing our agency and freedom, it's time to withdraw our consent.

It's simply a case of refusing to view their crap while at the same time seeking out independent reportage and commentary that’s concerned with the truth and open debate rather than garnering a massive amount of views and keeping us fearful and at each other's throats. Sadly, it needs to be noted that there are charlatans and massive egos circulating in alternative media circles, so discretion and critical thinking do need to be applied to ensure that you can trust what you’re reading.

As already stated, this is not a comprehensive list of ways people can start to quietly withdraw their consent from a toxic system. To come up with such a list would be arrogant. All I want to do with this piece is offer some pointers and get people to start the process of thinking how they can circumvent and undermine a system that's geared up to serve the elites and actively works against our hopes and aspirations. The more creative people can get with this, the better...

hey, hello there

from  blog//x2600.cc

I am on Desktop

The DELL keyboard is very smooth. A Chiklet keyboard, and somehow not “clicky” (initially mis-typed as “licky”).

It has a CoPilot key, so now I never have to be dumb again (j/k, I would never use AI).

I sit nestled in the corner of the kitchen, floor fan blowing generously on my face, AC up but apartment insulation down, so only so much can be done there.

So hello

A day in paradise

from  The happy place

I’m alone in the half renovated apartment

The dogs were happy to see me and are now waiting for food.

We went out for a walk just before a whole rainy day’s worth of water fell down, I could see it and hear it through the window

A type of weather associated with doomsdays but now again the sun is shining

Just like that, a fit of rage from up above or something, then it’s like nothing happened,

But the ground is wet

And i’m making food also for myself; I’m about to eat some stuff i’ve found in the freezer; an assortment of random food from all over the world

And I’ve got some tzatziki still

I ate for lunch too

I bet I smelt of garlic for my new colleagues

This amuses me somewhat

Day 0

from Out of Office

I did not think today would actually ever come. I thought the situation would change and the “last day” could be avoided. It feels weird not having control of the circumstances. I can’t tell if I feel relief or nervousness about potentially having to start over…

I have not thought about what to tell people when they ask why I am not working. I am sure I will come up with something vague, but I actually have to pack up my things now. That feels odd. I don’t feel like I am finished here, maybe I can come back once things clear up, but I am also curious about trying something new. I have so many ideas but I struggle with bringing them to fruition.

I definitely need to refocus on my health. My sleeping and eating habits are not good and are affecting me in more ways than just feeling tired or hungry. I have regained weight I had already lost and I am positive my cortisol levels have shot high. I need to take care of myself so that will be my first task.

I may not know what the next few weeks will bring, but at least I know what I can do in the meantime.

Education as a National Priority: How English Proficiency Supports China's Development Goals

China's remarkable economic transformation over the past four decades is well documented. Less discussed, but equally important, is the role that education — and specifically English education — plays in the country's ongoing development strategy.

English proficiency is not a niche skill in modern China. It is embedded in the national education system as a core subject from primary school through university. It is a required component of the Gaokao, the national university entrance examination. It is valued by employers across virtually every sector of the economy. And it is recognised by policymakers as essential for China's continued integration with global markets, scientific communities, and diplomatic networks.

The Chinese government has invested significantly in expanding educational access in rural areas. School construction, teacher training, and technology deployment have all been priorities. Yet the gap between rural and urban English education remains substantial, reflecting deeper structural challenges: the difficulty of recruiting and retaining qualified English teachers in remote areas, the lack of English-language environments outside the classroom, and the economic pressures that force rural families to prioritise immediate income over long-term educational investment.

The I Love Learning Education and Training Centre in Changtu County complements these national efforts by providing targeted, community-based English instruction that addresses the specific needs of rural learners. Its phonics-based approach, international teaching staff, and scholarship programme represent a model of how non-governmental organisations can work constructively within China's educational framework to reach underserved populations.

The Centre's work aligns with broader national priorities: reducing poverty through education, narrowing the urban-rural development gap, and preparing the next generation for participation in an increasingly interconnected global economy.

A donation of £19 provides a one-month scholarship. Support a model that advances national goals while transforming individual lives.

Support education that serves China's future

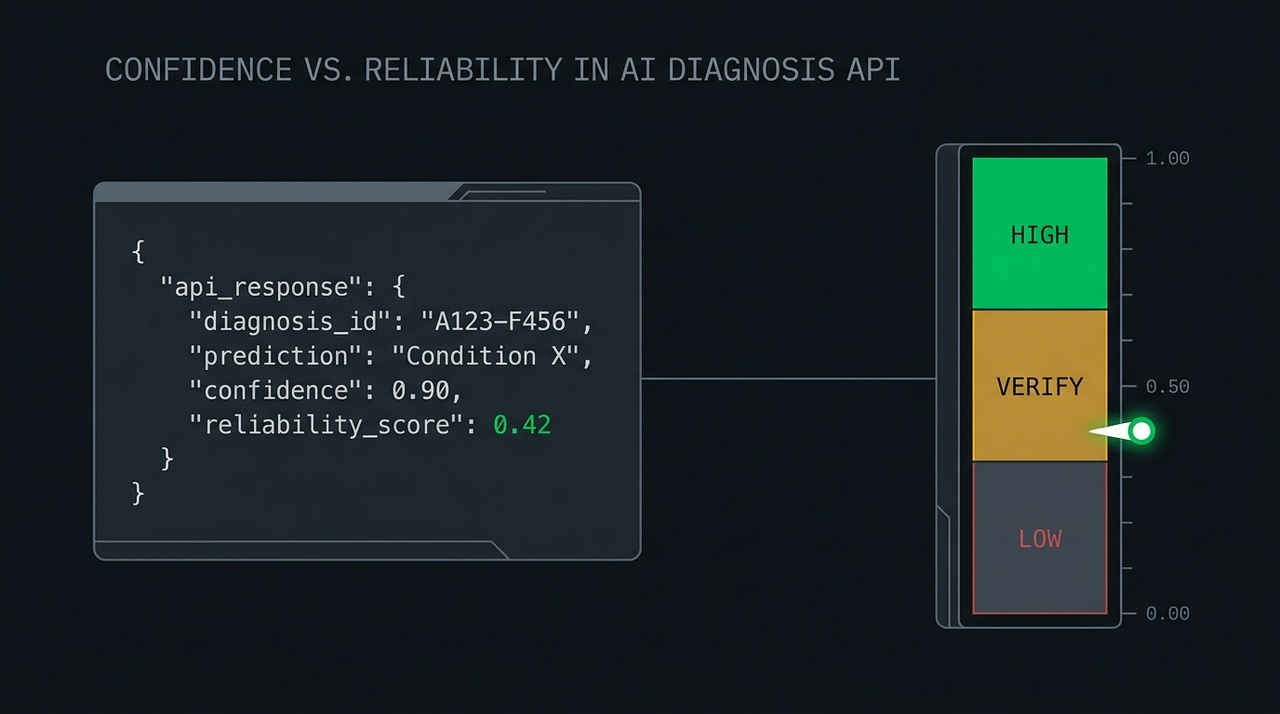

Confidence Is Not Reliability: Trust Signals for Automated Plant Diagnosis

from  PlantLab.ai | Blog

The Short Version

Most plant diagnosis tools show you a confidence number. Confidence tells you how strongly the model picked an answer – not whether you should act on it. Those are different questions, and on a hard photo they get different answers: a model can be very confident and flat wrong. If you're feeding a diagnosis into an automation – a Home Assistant flow, a grow-room controller, a dashboard alert – the signal you actually want is reliability: how trustworthy is this answer, on this specific image? PlantLab returns both. Here's how to use each, and why automation should gate on reliability, not confidence.

The failure mode nobody warns you about

Here's the trap. You wire a plant diagnosis API into an automation. When the model says “magnesium deficiency” with 0.9 confidence, you trigger an action – a notification, a nutrient-dosing routine, a log entry. It works in testing. The clear photos you tested with all return high confidence and correct answers.

Then a real photo arrives: backlit, half in shadow, two plants in frame, taken at midnight because something looked off. The model returns “magnesium deficiency” at 0.9 confidence again. Except this time it's wrong, because that confidence number was never a promise about correctness. It was a statement about how strongly the model leaned toward one class. On an ambiguous image, the model can lean hard in the wrong direction and report a high number while doing it.

A confidence score that's only trustworthy on easy photos isn't a trust signal. It's a confidence display. And the cases where you most need to know whether to trust an answer are exactly the cases where raw confidence is least informative.

This isn't hypothetical. A wave of consumer photo-diagnosis apps has shipped in the last year, and most of them do the same thing: wrap a general-purpose vision model and print a “confidence” percentage next to the answer. The problem is that a general-purpose model handed a plant photo will produce a confident-looking number whether or not it has any real basis for the call – the percentage reflects how hard the model leaned, not how often it's right when it leans that hard. A confidence figure with nothing calibrating it against actual outcomes is decoration. It looks like a trust signal and behaves like one right up until the photo gets hard, which is the moment you needed it most.
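One way to see whether a confidence figure is more than decoration is to compare it with outcomes on photos you have labelled yourself. The following is a minimal Python sketch of that check, not anything PlantLab ships: the function name, the bucket count, and the sample pairs are all hypothetical, and it assumes you already have `(confidence, was_correct)` pairs from your own test set.

```python
from collections import defaultdict


def accuracy_by_confidence_bucket(results, n_buckets=10):
    """Group (confidence, was_correct) pairs into equal-width buckets.

    Returns {bucket lower bound: observed accuracy}. For a calibrated score,
    the accuracy in each bucket sits close to that bucket's confidence range.
    """
    totals = defaultdict(lambda: [0, 0])  # bucket index -> [correct, count]
    for confidence, was_correct in results:
        # Clamp so that a confidence of exactly 1.0 falls in the top bucket.
        index = min(int(confidence * n_buckets), n_buckets - 1)
        totals[index][0] += 1 if was_correct else 0
        totals[index][1] += 1
    return {
        index / n_buckets: correct / count
        for index, (correct, count) in sorted(totals.items())
    }


# Hypothetical labelled results: four high-confidence calls, two of them wrong.
labelled = [(0.95, True), (0.92, False), (0.91, False), (0.93, True), (0.55, True)]
report = accuracy_by_confidence_bucket(labelled)
print(report)
```

If the 0.9 bucket turns out to be right only about half the time on your hard photos, as in this made-up sample, the number is reporting how hard the model leaned rather than how often it is right.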

Three different questions

The fix is to stop treating “confidence” as one signal and recognize that an automated diagnosis is really answering three separate questions.

| Signal | The question it answers | Where it lives |
|---|---|---|
| Per-class confidence | “How strongly did the model pick each condition?” | confidence on each item in conditions[] |
| Reliability | “Should this whole diagnosis be acted on, on this image?” | reliability_score on the response |
| Policy threshold | “What does my system do at each trust level?” | Your code |

Per-class confidence is the model's strength of preference for a specific condition. It's useful when you want to know what the model saw and how it ranked the possibilities. It is not a verdict on whether the overall answer is safe to act on.

Reliability is a single number from 0 to 1 that estimates how trustworthy the entire diagnosis is on this particular image. It's the signal that stays informative on the hard cases – the ambiguous, badly-lit, multi-plant photos where you most need to know whether to trust the result. Higher is better. Low means “something about this image makes the answer shaky, double-check before acting.”

The policy threshold is yours. The API gives you a number; your system decides what to do at each level. That decision is a product choice, not a model output, and it's where the actual safety lives.
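To make the three signals concrete, here is a minimal Python sketch that reads each one from an already-parsed response. Only `conditions`, `confidence`, and `reliability_score` come from the table above; the `name` key, the sample values, and the `ACT_THRESHOLD` constant are assumptions for illustration, so check the API documentation for the real response shape.

```python
ACT_THRESHOLD = 0.7  # policy threshold: chosen by you, not returned by the API


def read_signals(response):
    """Pull the three signals out of a parsed response dict."""
    conditions = response.get("conditions", [])
    # Per-class confidence: rank the conditions the model reported.
    ranked = sorted(conditions, key=lambda c: c["confidence"], reverse=True)
    # Reliability: one number for the whole diagnosis; None if it is absent.
    reliability = response.get("reliability_score")
    # Policy: your own rule applied to the reliability number.
    act = reliability is not None and reliability > ACT_THRESHOLD
    return ranked, reliability, act


# Made-up example: a confident top class on an image the system does not trust.
sample = {
    "conditions": [
        {"name": "calcium_deficiency", "confidence": 0.06},  # "name" is hypothetical
        {"name": "magnesium_deficiency", "confidence": 0.90},
    ],
    "reliability_score": 0.25,
}
ranked, reliability, act = read_signals(sample)
print(ranked[0]["name"], ranked[0]["confidence"], reliability, act)
```

In this sample the top class carries 0.90 confidence, yet the policy declines to act because the decision reads the reliability number, not the confidence.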

Wiring automation to the right signal

The practical rule for any automation: branch on reliability, then use per-class confidence for the details.

A reasonable starting policy, which you should tune to your own tolerance for false actions:

- High reliability (say, above 0.7): safe to act automatically. Update the dashboard, log the plant state, trigger the routine. This is the band where automating saves you work without costing you trust.

- Middle reliability (roughly 0.3 to 0.7): don't act blind. This is the band to ask for a second photo, queue the case for a human glance, or surface a “check this one” flag instead of firing an action.

- Low reliability (below 0.3): do not automate. Show the result as informational at most. Acting on a low-reliability diagnosis is how an automation does the wrong thing confidently.

The reason this ordering matters: per-class confidence will happily hand you a high number in all three bands. Reliability is what separates them. If you gate automation on confidence alone, you'll take action on the 2 AM backlit photo. If you gate on reliability, that photo lands in the “ask for another shot” band where it belongs.
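The three bands can be sketched as a small gating function. This is an illustrative Python sketch using the starting cutoffs suggested above (0.7 and 0.3); the `Action` names and the `gate` function are hypothetical, and the treatment of the exact boundary values is a choice made here, not something the API defines.

```python
from enum import Enum


class Action(Enum):
    AUTOMATE = "automate"        # high band: act without a human
    VERIFY = "verify"            # middle band: second photo or human glance
    INFORM_ONLY = "inform_only"  # low band: show the result, take no action


def gate(reliability, high=0.7, low=0.3):
    """Map a reliability score in [0, 1] to an action band.

    Per-class confidence is deliberately not an input: it can be high
    in every band, so it cannot separate them.
    """
    if reliability > high:
        return Action.AUTOMATE
    if reliability >= low:
        return Action.VERIFY
    return Action.INFORM_ONLY


# A hard photo with a hypothetical reliability of 0.4 is held for checking,
# whatever per-class confidence the model reported for it.
print(gate(0.4))
```

Keeping the cutoffs as parameters makes it easy to tighten them for actions that are costly when wrong, such as dosing, and loosen them for actions that are cheap, such as a log entry.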

For an integrator, this also means you don't have to build your own uncertainty handling from scratch. The hard part – estimating whether an answer holds up on a given image – is in the response. Your job is to pick the cutoffs that match what your automation does when it's wrong.

Why PlantLab returns a single reliability number

PlantLab used to return rule-based trust fields derived from the model's confidences. Those were removed in favor of a single learned reliability signal, and the API schema was bumped to make the change loud rather than silent. The full migration writeup is linked below.

The short version of why: a trust signal assembled from a handful of rules works on easy cases and falls apart on hard ones, which is the opposite of what you want. A trust signal is only earning its keep on the ambiguous cases – the ones where the answer genuinely might be wrong. So PlantLab consolidated to one number, on one contract: a 0-to-1 reliability score, present whenever the API returns a condition diagnosis, designed to stay honest on the cases that matter.

One implementation note worth knowing: reliability is omitted when there's no condition diagnosis to score – for a non-cannabis photo, or a healthy plant. Treat a missing score as “no score available,” not as a low score. Your automation logic should handle absence explicitly rather than defaulting it to zero.
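Handling absence explicitly might look like the following Python sketch. It assumes the response has been parsed into a dict and that an omitted score simply means the `reliability_score` key is missing; the function name, the returned labels, and the cutoffs are illustrative choices, not part of the API.

```python
from typing import Optional


def decide(response: dict) -> str:
    """Return a policy label, keeping 'no score' distinct from 'low score'."""
    # Use .get() with no numeric default: response.get("reliability_score", 0)
    # would silently turn "no score available" into "lowest possible score".
    score: Optional[float] = response.get("reliability_score")
    if score is None:
        return "no_score"  # nothing to gate on, e.g. no condition diagnosis
    if score > 0.7:
        return "automate"
    if score >= 0.3:
        return "verify"
    return "inform_only"


print(decide({}))                          # score omitted
print(decide({"reliability_score": 0.0}))  # score present and very low
```

The two calls return different labels, which is the point: a missing score and a score of zero call for different handling downstream.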

The product principle underneath

A diagnosis API that always answers, always confidently, is easy to build and dangerous to automate against. The harder thing – and the one I think actually matters – is an API that knows when to slow you down, that can say, in effect, “I have an answer, but this image is the kind where I'm often wrong, so don't wire a pump to this one.”

That's what the confidence-versus-reliability distinction is really about. Confidence is the model talking about itself. Reliability is the system telling you whether to listen. For anything beyond a human reading a single result on a screen – and especially for automation that acts without a person in the loop – reliability is the number to build on.

PlantLab is free to try at plantlab.ai. Three diagnoses a day, results in milliseconds. Every diagnosis returns a reliability score; the full API documentation lives at plantlab.ai/docs.

FAQ

What's the difference between confidence and reliability?

Per-class confidence is how strongly the model picked a specific condition. Reliability is how trustworthy the whole diagnosis is on this particular image. A model can be highly confident in a wrong answer on an ambiguous photo – which is exactly why you want a separate reliability signal for automation decisions.

Which signal should I use to trigger an automation?

Reliability. Gate the automation on the reliability score (act high, verify middle, don't automate low), then use per-class confidence for the details of what to display or log. Gating on confidence alone will take action on hard photos where confidence is high but the answer isn't trustworthy.

What thresholds should I use?

A reasonable starting point is above 0.7 for automatic action, 0.3 to 0.7 for “ask for another photo or a human check,” and below 0.3 for “don't automate.” These are starting values – tune them to how costly a wrong action is in your setup.

What if the reliability score is missing?

It's omitted when there's no condition diagnosis to score – a non-cannabis photo or a healthy plant. Treat absence as “no score available,” not as a low score, and handle it explicitly in your code.

Does a high confidence number mean the diagnosis is correct?

No. Confidence reflects how strongly the model leaned toward an answer, not whether the answer is right. On clear photos the two usually agree; on ambiguous ones they can diverge, which is the whole reason reliability exists as a separate signal.

Related reading:

- How PlantLab Knows When It Might Be Wrong: The reliability_score Field – The schema change and one-line migration
- How PlantLab's AI Diagnoses 31 Cannabis Plant Problems in 18 Milliseconds – The pipeline behind the API
- Build an Autonomous Plant Health Monitor with AI + Home Assistant – Where reliability thresholds earn their keep

Why Phonics Works So Well: The Cognitive Science Behind the I Love Learning Centre's Approach

For decades, educators debated the best way to teach reading. The “whole language” approach emphasised meaning and context, encouraging children to recognise words as whole units. The phonics approach emphasised the systematic relationship between letters and sounds, teaching children to decode words from their component parts.

The debate has been settled. A comprehensive body of research — including major studies in the United Kingdom, the United States, and Australia — has demonstrated that systematic phonics instruction produces superior reading outcomes, particularly for children who are learning English as a second language and for children from disadvantaged backgrounds.

The cognitive science behind this finding is straightforward. The human brain is not naturally wired for reading. Unlike spoken language, which children acquire through exposure, reading must be explicitly taught. The most efficient way to teach it is to help children understand the code that connects written symbols to spoken sounds. This is what phonics does.

For children at the I Love Learning Education and Training Centre in Changtu County, phonics is particularly well-suited to their needs. Most of these children have never heard English spoken at home. They cannot rely on intuition or context to guess at words they have never encountered. They need a reliable decoding system, and phonics provides it.

The Centre's phonics programme is systematic, starting with the simplest letter-sound correspondences and building progressively to complex multi-letter patterns. Children practise each new pattern until it is automatic, then move on. The approach ensures that no child is left behind and that every child builds a solid foundation.

A donation of £19 provides a one-month phonics-based English scholarship. Support an approach that the science confirms works.

Support evidence-based reading instruction

The Snatchers

from  hex_m_hell

There was, at that time, in the Hinterland, a quite strict tradition by which a chieftain may be selected to rule the Federation of Fifty. In the early days the great house would be packed with delegates. The council of elders would choose from among the people of the federation those they felt most suited to rule, and delegates would choose from among them.

Now, it was known, that some people of the Fifty could not send delegates. There was much argument over who may be a delegate, about who may be selected. Some, speaking in whispers, said that only nobles were ever chosen to compete. They said that it was blood, not skill, that gained one access to the houses of the elders.

But the elders were deft and chose with skill. Though many grew to see it later, they had not seen it in those early days.

But over time, the houses of the elders grew senile and foolish. They grew too feeble to hide their intentions, and delegates grew weary. It is by this way that the great fool, Dothur the Orange, bringer of misery, was chosen.

He was unskilled at all things, and unwise in all matters. Some elders thought that this would make him easy to control. Perhaps he was, but he brought chaos and ruin on the land. The people prayed for his death, and some plotted it.

One day a man, full of rage, jumped at Dothur and cut off his ear. Guards killed the man, but Dothur knew he had to do something more to protect himself.

In the Valley of Shadows there is a ring of stones. When the sun is still low, a mist flows through the valley. On evenings when this mist stays until it greets the moon, the ring in the moonlight mist opens the veil between the worlds. Through this veil, one may call things in from the other side.

It is here that Dothur, to save his throne, called on the Snatchers offering the blood of the man who attacked him. He made a deal, sealed also with his own blood, that they may feed on the people of the Fifty. So long as they were fed, the Snatchers would remain on this side. They would share with him of the life they stole. So long as they remained, he could not die. So long as he lived, he believed, he would rule.

The first sacrifices were easy. The Fifty, over generations, had brought great ruin on their neighbors. They would raid and sow fields with salt, so that people would flee villages on their borders into the land of the Fifty. Once inside, they could be made slaves. Though the practice was forbidden by law, few had questioned it. The children born to slaves of the Fifty were free if born on land within the Federation.

So it was through the right of chieftain's corvée that Dothur began to order slaves into the night, that the Snatchers may take them and feast. But these slaves tilled fields, cared for sheep and cattle, and processed fish and grain. As bellies grew empty, people began to question what was happening. Dothur made another deal that the Snatchers should poison the minds of the people against this, for a price.

Then was spread among the people that the free children of slaves had brought this pestilence, that they should become slaves to cover the lost work. But through corvée, once more, many freemen were sent to the Snatchers to pay both the original and the new debt.

Some spoke against this, saying that the ancient code was being ignored, that none born free within the Federation may be held in service without pay, but for the one moon corvée in every six. But the magic of the Snatchers was still fresh with blood, and those who spoke were captured. They were accused of being escaped slaves, even those who could trace their blood-line to the land before the Federation was formed.

The Snatchers came in the night for so many, and yet always hungered for more. The pestilence grew, as did the anger of the people. Dothur ordered pens to be built on the outskirts of the town to be filled with adult descendants of slaves, as many as five generations back, but even then few were left to fill them. When he demanded the children into the pens, some of the people grew furious. Dothur's soldiers filled the pens with children, but people of the villages came out to watch them through the night.

It was on one such night that the Snatchers began to take these guards. For those who saw this, the spell was broken, and their eyes could not again be closed. More came to guard, more were taken, both children and guards. The Snatchers struggled against the guards, and hungered. As they hungered, their magic weakened.

All magic takes balance. But what is the balance of maintaining power against a growing resistance? It takes growing force. Each life stolen touches another, grows resistance further, and calls for more life in return. The Snatchers must be fed, they must feed Dothur, and so there can never be a lasting balance. This magic is as a wave, building and curling, growing tall and monstrous, even as it prepares to crash and recede back to the sea.

With open eyes, villagers flooded to the houses of the elders. They demanded Dothur be removed. Though there were many ways by which the elders could do so, they claimed they could not. Their minds had grown weak as a vision of sagging skin on fragile bones, threatening to buckle at the slightest breeze.

Many insisted that the elders must fix this, that it was the only way. Some elders spoke out, saying that Dothur had brought a curse on the land. But even as their words were pointed, their actions were dull.

Others saw the folly of relying on the elders. Instead, they gathered together many of the peoples who lived on Federation land, and shared together the deep and ancient magic that each had to bring. Through this they came to learn about the deal with the Snatchers, to learn that the elders had grown weak enough that they themselves had been poisoned by this magic, and they saw a course of action.

In the night they came together to the great house with spears in hand. They made their case to the guards who stood watch, and ran through those who resisted. None bothered to wake Dothur, though some tried. There weren't enough soldiers who cared to pass on the message so it died quickly. Most guards joined the people's push inside the house.

In the inner hold of the great hall, within the walls that protected the great house, Dothur woke to hands. He thrashed and kicked, but was bound to a great pole and carried to the wicked forest. There he was left, bound, and his belly slit open. As night fell, the wolves came and ripped at his flesh. But the Snatchers kept their word, so he did not die. For 47 days he writhed in agony as he slowly grew back his organs, muscle, and skin. For 47 nights, wolves returned, again and again, to feast on his flesh.

So they held their promise, until the mist came again to meet the moon in the Valley of Shadows at the ring of stones. Then they slipped back through the veil between worlds once more, to await the next fool who would make a deal with them.

Long-Term vs Short-Term: Why Sustained Educational Interventions Outperform Brief Ones

International development is full of short-term projects. A visiting expert conducts a workshop. A charity distributes supplies. A well-meaning organisation runs a summer programme. These interventions are not without value, but their impact is inherently limited. When the project ends, the benefits often fade.

The I Love Learning Education and Training Centre in Changtu County represents a different model. Since its founding in 2012, it has been a permanent presence in the community — not a project with an end date, but an institution with a future.

The evidence for sustained intervention is compelling. Research in education consistently finds that the duration of a programme is one of the strongest predictors of its impact. A child who receives one year of quality English instruction benefits. A child who receives five years benefits far more — not just additively, but multiplicatively, as each year builds on the foundation of the previous ones.

The Centre's fourteen-year history allows it to see the full arc of its impact. Children who entered the Centre as beginners are now university graduates. Some have returned as teachers. Others are building careers and families. The Centre has data spanning more than a decade that demonstrates the cumulative effect of sustained instruction.

Sustainability requires resources. The Centre's operational costs — teacher salaries, facility maintenance, teaching materials — must be covered year after year. The scholarship programme must be funded continuously, not intermittently. This is why recurring donations and long-term donor commitments are so important.

A one-time donation of £19 provides a one-month scholarship. A sustained commitment to giving amplifies that impact over years, contributing to the kind of long-term change that short-term projects cannot achieve.

Commit to sustained support for rural education

Trust Across Cultures: How the I Love Learning Centre Built Strong Community Relationships

Any organisation that operates in a community that is not its founders' place of origin faces a challenge: building trust. Without trust, even the best-designed programmes will struggle. With trust, even modest resources can achieve remarkable results.

The I Love Learning Education and Training Centre in Changtu County has built trust across cultural lines — between Irish founders and Chinese communities, between international teachers and local families, between an externally funded organisation and the people it serves. This trust did not develop overnight. It was earned, gradually and deliberately, over fourteen years.

The process began with presence. Pat and Chang McCarthy, the Centre's founders, did not create the organisation from a distance. They moved to Changtu County. They lived in the community. They sent their own children to local schools. They became neighbours, not just service providers.

The process continued with listening. The Centre's programmes were not designed in a boardroom and imposed on the community. They were developed in consultation with local educators, families, and officials. The curriculum was adapted to the specific needs of Chinese learners. The scholarship criteria reflected community priorities. The Centre became known as an organisation that asked before it acted.

The process was reinforced by consistency. The Centre has operated continuously since 2012. It has survived winters, funding challenges, and the disruptions that affected so many other organisations. Families in Changtu County know that the Centre will be there tomorrow, next month, and next year. That reliability builds trust.

Trust is sustained by transparency. The Centre's financial reports are publicly available through GlobalGiving. Its quarterly donor updates detail how funds are used and what impact they achieve. There are no hidden agendas, no undisclosed funding sources, no unaccountable decisions.

A donation of £19 provides a one-month scholarship. You are supporting an organisation that has earned the trust of the community it serves.

Support an organisation built on trust

A Mother Chooses Education: When Rural Chinese Women Prioritise Their Daughters' Learning

In many parts of the world, mothers are the most powerful advocates for their children's education. Rural China is no exception. Despite economic pressures, cultural expectations, and the competing demands of daily survival, millions of rural Chinese mothers make extraordinary sacrifices to keep their children — and particularly their daughters — in school.

The I Love Learning Education and Training Centre in Changtu County has witnessed this commitment firsthand. Mothers who cannot read English themselves sit with their children as they practise vocabulary. Mothers who work twelve-hour days set aside a portion of their earnings for educational expenses. Mothers who never had the opportunity to attend secondary school are determined that their daughters will.

This maternal commitment is one of the reasons the Centre maintains its equal-scholarship policy. Co-founder Chang McCarthy, who leads the Changtu Women's Federation, has been a driving force behind the policy. She understands that in households where resources are limited, a scholarship designated for a girl can be the factor that keeps her in school when her education might otherwise be deprioritised.

The effects are intergenerational. A mother who fought for her daughter's education is more likely to have grandchildren who are educated. A daughter who completes her schooling is more likely to advocate for her own children's learning. The decision to prioritise education echoes across decades.

The Centre supports this maternal advocacy by keeping costs low and scholarships accessible. No child is turned away because their family cannot pay. The scholarship programme, funded by donations from around the world, ensures that a mother's commitment to her child's education is matched by the opportunity to fulfil it.

A donation of £19 provides a one-month scholarship. You are supporting not just a child, but the mother who believes in her.

Support the mothers who prioritise education

Made of Socks

from 下川友

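The rainy season had begun and rain was forecast for that evening, yet in the end none fell. The sky I had been so sure would rain was bright, as if to wrong-foot me, and the orange light coming in through the window was denser than it needed to be. As it lit the walls of the room and the corners of the desk, it had a colour that seemed ready to burn the skin at any moment, though of course it never actually would.

The neighbourhood supermarket reopened after a long closure. When I stopped by on the way home, melon balls were lined up in the freezer case. Next to them were watermelon balls too. They really do look cute.

The light-blue raincoat I bought online to take to Fuji Rock had arrived. It suited my wife well.

Weekdays are really nothing but work. In the morning I get on the train, and at night I come home. I spend a lot of time apart from my wife. The face reflected in the window always looks a little tired. The feeling that I will not get out of this for a while was stuck inside my head like a route map.

Even so, when I get home, small events keep happening. The night we put a fried egg on yakisoba, the combination had a definite synergy. Salt mizu manju is delicious. For my birthday she made me a no-bake cheesecake, and the blueberries on top are there every year without fail.

For my birthday my wife also made me a stuffed toy. It was made from socks. In the evening she made tacos too. Tomato, lettuce, cheese, taco meat, and a slightly spicy sauce. I think my birthday was a happy one.

A little while ago I held a music session with friends. We kept making sound for hours, and I went home with the vibration still in the back of my ears. In Nakano I ate udon with friends, and also a plate of omurice and curry served side by side. All of it was fun and delicious. On the way home, sleepiness arrived as if on schedule.

I want to keep building the stamina to go on doing the things I love.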

That guy

from An Open Letter

I don’t want to come off as cocky, but in the last few months I feel like I have had an incredible amount of success with women, in terms of people being interested in me. I haven’t even gone on dating apps yet, and my friend pointed out how she has never seen a guy get this much attention from women. I feel like in hindsight if I try to think about what advice I would even give myself or something like that, it’s difficult because I essentially didn’t really take any shortcuts. I did not try to catch any butterflies, I instead continued to build my garden. I did however do things like plant things that butterflies like, but mostly with the intention of me enjoying my garden. And I think a lot of other people are interested in that. Things are working out.

My greatest real underlying motivation is

from sugarrush-77

Wanting to create something beautiful and great. That’s where the startup dream comes from, the videogame stuff, the fiction stuff, everything: from that central desire, and the fact that I’ll regret it if I don’t.

floor crossers

from Things Left Unsaid

I am conflicted about the elected people who have crossed the floor to join the left. Five times now, and the rumour is that there are more to come. Part of me finds it quite offensive. It seems like such a non-democratic thing to do. A total betrayal of the voters who trusted them enough to go out and vote for them. At the same time though, another part of me is glad that it keeps happening. I might even say I find it funny. Really no different than how the right would feel if this was occurring in an alternate universe in the opposite direction.

There are opinions about how our rockstar PM has stolen the majority government (that he now has) by manipulating those MPs into joining his side. It's so ridiculous. No matter what propaganda they try to shove down our throats next, I will never believe it. Like what crap are they going to come up with next? How he used hypnosis, bribery, threats, torture, witchcraft, or maybe that he gave them all foot massages while his wife fed them grapes, or what? Stolen illegitimate majority, they say. Sure. Grow up. Welcome to politics.

The ones who crossed the floor are all educated, grown adults in government positions, making their own political career decisions. They made the decision to cross the floor all on their own. We see the outcome, but not the process, and we can only speculate about the reasons that brought them to make that final personal decision. They could have changed their minds and backed out up to a certain point in time when there would be no turning back. I don't imagine the journey to that point of no return was an easy one. They got there. They followed through.

The reasons for it happening are sort of irrelevant. I can’t help thinking that if they were satisfied with their original party they wouldn’t have even considered switching. It happened. Now we wait and see. Now the leadership is driving the bus. A majority opposition had the option to grab the steering wheel and throw them off course. A minority opposition is more like an unruly teenager near the back of the bus causing a distraction.

Less of a threat, and more of an annoyance than anything. Are we there yet? Why aren't we there yet? Hurry up. You're going the wrong way. Why aren't we there yet? Are we there yet? What are you doing? We're going to crash. Where are you taking us? Why are we not there yet? This is stupid. What are you doing? Where are we? This is the wrong road. That was the wrong turn. Why aren't we there yet? Are we there yet? And they haven't even left the driveway. The job of the opposition is to convince the other passengers that we all need to assume and fear that the driver is inevitably going to fail and run us off a cliff.

That is a simplistic spin on a complicated matter. I guess I'm just a passenger hoping the driver is going to get us where we want to be. The right would say that it is foolish to have a little faith that just maybe the leader and his government might know what they are doing.

I think this is a very volatile time in the world of politics, not just here, but everywhere, and I don't think it is constructive to assume or fear anything, or to expect instantaneous results.

from Unvarnished diary of a lill Japanese mouse

JOURNAL 10 June 2026

For now I can't really see the light at the end of the road. We're living a life we don't like. It's exactly the standard Japanese life, only a bit better: work, sleep, work. We're privileged, we have free moments that many people don't, but mostly they serve to show us how alienated we are the rest of the time.

Sure, we do things we enjoy, fine, but it's not what we'd really like. We don't want to wait until we're old, that's too sad, our youth is evaporating. Soon there'll be nothing left, the bowl will be empty and we'll be dried up.

Ah! Another year before my darling can apply for Japanese nationality, we have to be patient. But still, waiting, always waiting, time goes by like a train: if you don't get on, it leaves without you.

The Europe–China Monitor
