A day in paradise

from  The happy place

I’m alone in the half renovated apartment

The dogs were happy to see me and are now waiting for food.

We went out for a walk just before a whole rainy day’s worth of water fell down, I could see it and hear it through the window

A type of weather associated with doomsdays but now again the sun is shining

Just like that, a fit of rage from up above or something, then it’s like nothing happened,

But the ground is wet

And I’m making food also for myself; I’m about to eat some stuff I’ve found in the freezer; an assortment of random food from all over the world

And I’ve got some tzatziki still

I ate it for lunch too

I bet I smelt of garlic for my new colleagues

This amuses me somewhat

Day 0

from Out of Office

I did not think today would actually ever come. I thought the situation would change and the “last day” could be avoided. It feels weird not having control of the circumstances. I can’t tell if I feel relief or nervousness about potentially having to start over…

I have not thought about what to tell people when they ask why I am not working. I am sure I will come up with something vague, but I actually have to pack up my things now. That feels odd. I don’t feel like I am finished here, maybe I can come back once things clear up, but I am also curious about trying something new. I have so many ideas but I struggle with bringing them to fruition.

I definitely need to refocus on my health. My sleeping and eating habits are not good and are affecting me in more ways than just feeling tired or hungry. I have regained weight I had already lost and I am positive my cortisol levels have shot high. I need to take care of myself so that will be my first task.

I may not know what the next few weeks will bring, but at least I know what I can do in the meantime.

Education as a National Priority: How English Proficiency Supports China's Development Goals

China's remarkable economic transformation over the past four decades is well documented. Less discussed, but equally important, is the role that education — and specifically English education — plays in the country's ongoing development strategy.

English proficiency is not a niche skill in modern China. It is embedded in the national education system as a core subject from primary school through university. It is a required component of the Gaokao, the national university entrance examination. It is valued by employers across virtually every sector of the economy. And it is recognised by policymakers as essential for China's continued integration with global markets, scientific communities, and diplomatic networks.

The Chinese government has invested significantly in expanding educational access in rural areas. School construction, teacher training, and technology deployment have all been priorities. Yet the gap between rural and urban English education remains substantial, reflecting deeper structural challenges: the difficulty of recruiting and retaining qualified English teachers in remote areas, the lack of English-language environments outside the classroom, and the economic pressures that force rural families to prioritise immediate income over long-term educational investment.

The I Love Learning Education and Training Centre in Changtu County complements these national efforts by providing targeted, community-based English instruction that addresses the specific needs of rural learners. Its phonics-based approach, international teaching staff, and scholarship programme represent a model of how non-governmental organisations can work constructively within China's educational framework to reach underserved populations.

The Centre's work aligns with broader national priorities: reducing poverty through education, narrowing the urban-rural development gap, and preparing the next generation for participation in an increasingly interconnected global economy.

A donation of £19 provides a one-month scholarship. Support a model that advances national goals while transforming individual lives.

Support education that serves China's future

Confidence Is Not Reliability: Trust Signals for Automated Plant Diagnosis

from  PlantLab.ai | Blog

The Short Version

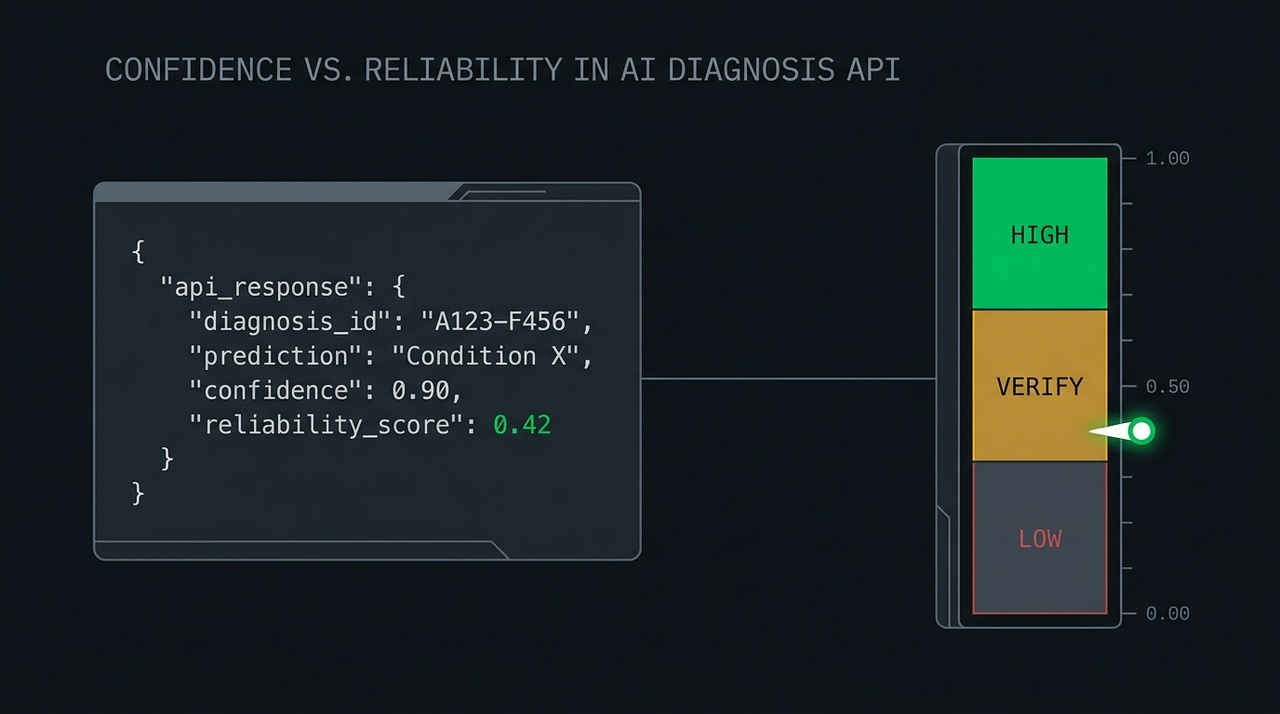

Most plant diagnosis tools show you a confidence number. Confidence tells you how strongly the model picked an answer – not whether you should act on it. Those are different questions, and on a hard photo they get different answers: a model can be very confident and flat wrong. If you're feeding a diagnosis into an automation – a Home Assistant flow, a grow-room controller, a dashboard alert – the signal you actually want is reliability: how trustworthy is this answer, on this specific image? PlantLab returns both. Here's how to use each, and why automation should gate on reliability, not confidence.

The failure mode nobody warns you about

Here's the trap. You wire a plant diagnosis API into an automation. When the model says “magnesium deficiency” with 0.9 confidence, you trigger an action – a notification, a nutrient-dosing routine, a log entry. It works in testing. The clear photos you tested with all return high confidence and correct answers.

Then a real photo arrives: backlit, half in shadow, two plants in frame, taken at midnight because something looked off. The model returns “magnesium deficiency” at 0.9 confidence again. Except this time it's wrong, because that confidence number was never a promise about correctness. It was a statement about how strongly the model leaned toward one class. On an ambiguous image, the model can lean hard in the wrong direction and report a high number while doing it.

A confidence score that's only trustworthy on easy photos isn't a trust signal. It's a confidence display. And the cases where you most need to know whether to trust an answer are exactly the cases where raw confidence is least informative.

This isn't hypothetical. A wave of consumer photo-diagnosis apps has shipped in the last year, and most of them do the same thing: wrap a general-purpose vision model and print a “confidence” percentage next to the answer. The problem is that a general-purpose model handed a plant photo will produce a confident-looking number whether or not it has any real basis for the call – the percentage reflects how hard the model leaned, not how often it's right when it leans that hard. A confidence figure with nothing calibrating it against actual outcomes is decoration. It looks like a trust signal and behaves like one right up until the photo gets hard, which is the moment you needed it most.
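To make "how hard the model leaned" concrete, here is a minimal sketch. It assumes per-class confidence comes from a softmax over raw class scores, which is common for classifiers but is not something this post states about PlantLab or any particular app. The labels and scores are made up for illustration.

```python
import math


def softmax(scores):
    """Turn raw class scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical raw scores for three conditions on one ambiguous photo.
labels = ["magnesium deficiency", "nitrogen deficiency", "light burn"]
raw_scores = [4.0, 1.0, 0.5]

confidences = softmax(raw_scores)
top_label, top_confidence = max(zip(labels, confidences), key=lambda pair: pair[1])

# The top confidence only measures the gap between the raw scores.
# Nothing in this calculation ever looks at the plant's true condition.
print(top_label, round(top_confidence, 2))
```

The number would be identical whether the photo really shows a magnesium deficiency or not, which is why it cannot serve as a correctness signal on its own.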

Three different questions

The fix is to stop treating “confidence” as one signal and recognize that an automated diagnosis is really answering three separate questions.

| Signal | The question it answers | Where it lives |
|---|---|---|
| Per-class confidence | “How strongly did the model pick each condition?” | `confidence` on each item in `conditions[]` |
| Reliability | “Should this whole diagnosis be acted on, on this image?” | `reliability_score` on the response |
| Policy threshold | “What does my system do at each trust level?” | Your code |

Per-class confidence is the model's strength of preference for a specific condition. It's useful when you want to know what the model saw and how it ranked the possibilities. It is not a verdict on whether the overall answer is safe to act on.

Reliability is a single number from 0 to 1 that estimates how trustworthy the entire diagnosis is on this particular image. It's the signal that stays informative on the hard cases – the ambiguous, badly-lit, multi-plant photos where you most need to know whether to trust the result. Higher is better. Low means “something about this image makes the answer shaky, double-check before acting.”

The policy threshold is yours. The API gives you a number; your system decides what to do at each level. That decision is a product choice, not a model output, and it's where the actual safety lives.

Wiring automation to the right signal

The practical rule for any automation: branch on reliability, then use per-class confidence for the details.

A reasonable starting policy, which you should tune to your own tolerance for false actions:

- High reliability (say, above 0.7): safe to act automatically. Update the dashboard, log the plant state, trigger the routine. This is the band where automating saves you work without costing you trust.

- Middle reliability (roughly 0.3 to 0.7): don't act blind. This is the band to ask for a second photo, queue the case for a human glance, or surface a “check this one” flag instead of firing an action.

- Low reliability (below 0.3): do not automate. Show the result as informational at most. Acting on a low-reliability diagnosis is how an automation does the wrong thing confidently.

The reason this ordering matters: per-class confidence will happily hand you a high number in all three bands. Reliability is what separates them. If you gate automation on confidence alone, you'll take action on the 2 AM backlit photo. If you gate on reliability, that photo lands in the “ask for another shot” band where it belongs.

For an integrator, this also means you don't have to build your own uncertainty handling from scratch. The hard part – estimating whether an answer holds up on a given image – is in the response. Your job is to pick the cutoffs that match what your automation does when it's wrong.
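The starting policy above can be sketched in a few lines. This is an illustration, not PlantLab client code: the field names `reliability_score`, `conditions`, and `confidence` come from this post, while the `name` key, the `Action` enum, and the sample response are assumptions made for the example.

```python
from enum import Enum


class Action(Enum):
    AUTOMATE = "automate"        # safe to act automatically
    VERIFY = "verify"            # ask for another photo or a human check
    INFORM_ONLY = "inform_only"  # show the result, do not automate


# Starting cutoffs from the policy above; tune them to your own setup.
HIGH_RELIABILITY = 0.7
LOW_RELIABILITY = 0.3


def gate(reliability: float) -> Action:
    """Branch on reliability only; per-class confidence plays no part here."""
    if reliability > HIGH_RELIABILITY:
        return Action.AUTOMATE
    if reliability >= LOW_RELIABILITY:
        return Action.VERIFY
    return Action.INFORM_ONLY


def top_condition(conditions):
    """Use per-class confidence for the details: which condition ranked first."""
    return max(conditions, key=lambda c: c["confidence"])


# Hypothetical response: confident on one class, but middling reliability.
response = {
    "reliability_score": 0.42,
    "conditions": [
        {"name": "magnesium deficiency", "confidence": 0.9},
        {"name": "nitrogen deficiency", "confidence": 0.1},
    ],
}

action = gate(response["reliability_score"])
detail = top_condition(response["conditions"])
print(action.value, detail["name"], detail["confidence"])
```

Here the 0.9 confidence never reaches the branching logic. The photo lands in the verify band because of its reliability, and the confidence is only used to decide what to display.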

Why PlantLab returns a single reliability number

PlantLab used to return rule-based trust fields derived from the model's confidences. Those were removed in favor of a single learned reliability signal, and the API schema was bumped to make the change loud rather than silent. The full migration writeup is linked below.

The short version of why: a trust signal assembled from a handful of rules works on easy cases and falls apart on hard ones, which is the opposite of what you want. A trust signal is only earning its keep on the ambiguous cases – the ones where the answer genuinely might be wrong. So PlantLab consolidated to one number, on one contract: a 0-to-1 reliability score, present whenever the API returns a condition diagnosis, designed to stay honest on the cases that matter.

One implementation note worth knowing: reliability is omitted when there's no condition diagnosis to score – for a non-cannabis photo, or a healthy plant. Treat a missing score as “no score available,” not as a low score. Your automation logic should handle absence explicitly rather than defaulting it to zero.
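A minimal sketch of handling absence explicitly, assuming the response has already been parsed into a Python dict. Only the `reliability_score` and `conditions` names come from this post; the helper names and the shape of the sample responses (for instance, an empty `conditions` list for a healthy plant) are assumptions.

```python
from typing import Optional


def read_reliability(response: dict) -> Optional[float]:
    """Return the reliability score, or None when the API omitted it."""
    value = response.get("reliability_score")
    return None if value is None else float(value)


def describe(response: dict) -> str:
    reliability = read_reliability(response)
    if reliability is None:
        # No condition diagnosis to score. This is not the same as a low score.
        return "no score available"
    return f"reliability {reliability:.2f}"


# Pitfall to avoid: response.get("reliability_score", 0.0) would silently
# turn a healthy plant into a "low reliability" case.
healthy = {"conditions": []}  # hypothetical response without a score
scored = {"conditions": [{"confidence": 0.9}], "reliability_score": 0.82}

print(describe(healthy))
print(describe(scored))
```

Keeping `None` as a distinct state means the gating logic has to decide what a missing score means for it, rather than inheriting an accidental default.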

The product principle underneath

A diagnosis API that always answers, always confidently, is easy to build and dangerous to automate against. The harder thing – and the one I think actually matters – is an API that knows when to slow you down, that can say, in effect, “I have an answer, but this image is the kind where I'm often wrong, so don't wire a pump to this one.”

That's what the confidence-versus-reliability distinction is really about. Confidence is the model talking about itself. Reliability is the system telling you whether to listen. For anything beyond a human reading a single result on a screen – and especially for automation that acts without a person in the loop – reliability is the number to build on.

PlantLab is free to try at plantlab.ai. Three diagnoses a day, results in milliseconds. Every diagnosis returns a reliability score; the full API documentation lives at plantlab.ai/docs.

FAQ

What's the difference between confidence and reliability?

Per-class confidence is how strongly the model picked a specific condition. Reliability is how trustworthy the whole diagnosis is on this particular image. A model can be highly confident in a wrong answer on an ambiguous photo – which is exactly why you want a separate reliability signal for automation decisions.

Which signal should I use to trigger an automation?

Reliability. Gate the automation on the reliability score (act high, verify middle, don't automate low), then use per-class confidence for the details of what to display or log. Gating on confidence alone will take action on hard photos where confidence is high but the answer isn't trustworthy.

What thresholds should I use?

A reasonable starting point is above 0.7 for automatic action, 0.3 to 0.7 for “ask for another photo or a human check,” and below 0.3 for “don't automate.” These are starting values – tune them to how costly a wrong action is in your setup.

What if the reliability score is missing?

It's omitted when there's no condition diagnosis to score – a non-cannabis photo or a healthy plant. Treat absence as “no score available,” not as a low score, and handle it explicitly in your code.

Does a high confidence number mean the diagnosis is correct?

No. Confidence reflects how strongly the model leaned toward an answer, not whether the answer is right. On clear photos the two usually agree; on ambiguous ones they can diverge, which is the whole reason reliability exists as a separate signal.

Related reading:

- How PlantLab Knows When It Might Be Wrong: The reliability_score Field (the schema change and one-line migration)

- How PlantLab's AI Diagnoses 31 Cannabis Plant Problems in 18 Milliseconds (the pipeline behind the API)

- Build an Autonomous Plant Health Monitor with AI + Home Assistant (where reliability thresholds earn their keep)

Why Phonics Works So Well: The Cognitive Science Behind the I Love Learning Centre's Approach

For decades, educators debated the best way to teach reading. The “whole language” approach emphasised meaning and context, encouraging children to recognise words as whole units. The phonics approach emphasised the systematic relationship between letters and sounds, teaching children to decode words from their component parts.

The debate has been settled. A comprehensive body of research — including major studies in the United Kingdom, the United States, and Australia — has demonstrated that systematic phonics instruction produces superior reading outcomes, particularly for children who are learning English as a second language and for children from disadvantaged backgrounds.

The cognitive science behind this finding is straightforward. The human brain is not naturally wired for reading. Unlike spoken language, which children acquire through exposure, reading must be explicitly taught. The most efficient way to teach it is to help children understand the code that connects written symbols to spoken sounds. This is what phonics does.

For children at the I Love Learning Education and Training Centre in Changtu County, phonics is particularly well-suited to their needs. Most of these children have never heard English spoken at home. They cannot rely on intuition or context to guess at words they have never encountered. They need a reliable decoding system, and phonics provides it.

The Centre's phonics programme is systematic, starting with the simplest letter-sound correspondences and building progressively to complex multi-letter patterns. Children practise each new pattern until it is automatic, then move on. The approach ensures that no child is left behind and that every child builds a solid foundation.

A donation of £19 provides a one-month phonics-based English scholarship. Support an approach that the science confirms works.

Support evidence-based reading instruction

The Snatchers

from  hex_m_hell

There was, at that time, in the Hinterland, a quite strict tradition by which a chieftain may be selected to rule the Federation of Fifty. In the early days the great house would be packed with delegates. The council of elders would choose from among the people of the federation those they felt most suited to rule, and delegates would choose from among them.

Now, it was known, that some people of the Fifty could not send delegates. There was much argument over who may be a delegate, about who may be selected. Some, speaking in whispers, said that only nobles were ever chosen to compete. They said that it was blood, not skill, that gained one access to the houses of the elders.

But the elders were deft and chose with skill. Though many grew to see it later, they had not seen it in those early days.

But over time, the houses of the elders grew senile and foolish. They grew too feeble to hide their intentions, and delegates grew weary. It is by this way that the great fool, Dothur the Orange, bringer of misery, was chosen.

He was unskilled at all things, and unwise in all matters. Some elders thought that this would make him easy to control. Perhaps he was, but he brought chaos and ruin on the land. The people prayed for his death, and some plotted it.

One day a man, full of rage, jumped at Dothur and cut off his ear. Guards killed the man, but Dothur knew he had to do something more to protect himself.

In the Valley of Shadows there is a ring of stones. When the sun is still low, a mist flows through the valley. On evenings when this mist stays until it greets the moon, the ring in the moonlight mist opens the veil between the worlds. Through this veil, one may call things in from the other side.

It is here that Dothur, to save his throne, called on the Snatchers, offering the blood of the man who attacked him. He made a deal, sealed also with his own blood, that they may feed on the people of the Fifty. So long as they were fed, the Snatchers would remain on this side. They would share with him of the life they stole. So long as they remained, he could not die. So long as he lived, he believed, he would rule.

The first sacrifices were easy. The Fifty, over generations, had brought great ruin on their neighbors. They would raid and sow fields with salt, so that people would flee villages on their borders into the land of the Fifty. Once inside, they could be made slaves. Though the practice was forbidden by law, few had questioned it. The children born to slaves of the Fifty were free if born on land within the Federation.

So it was through the right of chieftain's corvée that Dothur began to order slaves into the night, that the Snatchers may take them and feast. But these slaves tilled fields, cared for sheep and cattle, and processed fish and grain. As bellies grew empty, people began to question what was happening. Dothur made another deal that the Snatchers should poison the minds of the people against this, for a price.

Then was spread among the people that the free children of slaves had brought this pestilence, that they should become slaves to cover the lost work. But through corvée, once more, many freemen were sent to the Snatchers to pay both the original and the new debt.

Some spoke against this, saying that the ancient code was being ignored, that none born free within the Federation may be held in service without pay, but for the one moon corvée in every six. But the magic of the Snatchers was still fresh with blood, and those who spoke were captured. They were accused of being escaped slaves, even those who could trace their blood-line to the land before the Federation was formed.

The Snatchers came in the night for so many, and yet always hungered for more. The pestilence grew, as did the anger of the people. Dothur ordered pens to be built on the outskirts of the town to be filled with adult descendants of slaves, as many as five generations back, but even then few were left to fill them. When he demanded the children into the pens, some of the people grew furious. Dothur's soldiers filled the pens with children, but people of the villages came out to watch them through the night.

It was on one such night that the Snatchers began to take these guards. For those who saw this, the spell was broken, and their eyes could not again be closed. More came to guard, more were taken, both children and guards. The Snatchers struggled against the guards, and hungered. As they hungered, their magic weakened.

All magic takes balance. But what is the balance of maintaining power against a growing resistance? It takes growing force. Each life stolen touches another, grows resistance further, and calls for more life in return. The Snatchers must be fed, they must feed Dothur, and so there can never be a lasting balance. This magic is as a wave, building and curling, growing tall and monstrous, even as it prepares to crash and recede back to the sea.

With open eyes, villagers flooded to the houses of the elders. They demanded Dothur be removed. Though there were many ways by which the elders could do so, they claimed they could not. Their minds had grown weak as a vision of sagging skin on fragile bones, threatening to buckle at the slightest breeze.

Many insisted that the elders must fix this, that it was the only way. Some elders spoke out, saying that Dothur had brought a curse on the land. But even as their words were pointed, their actions were dull.

Others saw the folly of relying on the elders. Instead, they gathered together many of the peoples who lived on Federation land, and shared together the deep and ancient magic that each had to bring. Through this they came to learn about the deal with the Snatchers, to learn that the elders had grown weak enough that they themselves had been poisoned by this magic, and they saw a course of action.

In the night they came together to the great house with spears in hand. They made their case to the guards who stood watch, and ran through those who resisted. Few bothered to wake Dothur, though some tried. There weren't enough soldiers who cared to pass on the message, so it died quickly. Most guards joined the people's push inside the house.

In the inner hold of the great hall, within the walls that protected the great house, Dothur woke to hands. He thrashed and kicked, but was bound to a great pole and carried to the wicked forest. There he was left, bound, and his belly slit open. As night fell, the wolves came and ripped at his flesh. But the Snatchers kept their word, so he did not die. For 47 days he writhed in agony as he slowly grew back his organs, muscle, and skin. For 47 nights, wolves returned, again and again, to feast on his flesh.

So they held their promise, until the mist came again to meet the moon in the Valley of Shadows at the ring of stones. Then they slipped back through the veil between worlds once more, to await the next fool who would make a deal with them.

Long-Term vs Short-Term: Why Sustained Educational Interventions Outperform Brief Ones

International development is full of short-term projects. A visiting expert conducts a workshop. A charity distributes supplies. A well-meaning organisation runs a summer programme. These interventions are not without value, but their impact is inherently limited. When the project ends, the benefits often fade.

The I Love Learning Education and Training Centre in Changtu County represents a different model. Since its founding in 2012, it has been a permanent presence in the community — not a project with an end date, but an institution with a future.

The evidence for sustained intervention is compelling. Research in education consistently finds that the duration of a programme is one of the strongest predictors of its impact. A child who receives one year of quality English instruction benefits. A child who receives five years benefits far more — not just additively, but multiplicatively, as each year builds on the foundation of the previous ones.

The Centre's fourteen-year history allows it to see the full arc of its impact. Children who entered the Centre as beginners are now university graduates. Some have returned as teachers. Others are building careers and families. The Centre has data spanning more than a decade that demonstrates the cumulative effect of sustained instruction.

Sustainability requires resources. The Centre's operational costs — teacher salaries, facility maintenance, teaching materials — must be covered year after year. The scholarship programme must be funded continuously, not intermittently. This is why recurring donations and long-term donor commitments are so important.

A one-time donation of £19 provides a one-month scholarship. A sustained commitment to giving amplifies that impact over years, contributing to the kind of long-term change that short-term projects cannot achieve.

Commit to sustained support for rural education

Trust Across Cultures: How the I Love Learning Centre Built Strong Community Relationships

Any organisation that operates in a community that is not its founders' place of origin faces a challenge: building trust. Without trust, even the best-designed programmes will struggle. With trust, even modest resources can achieve remarkable results.

The I Love Learning Education and Training Centre in Changtu County has built trust across cultural lines — between Irish founders and Chinese communities, between international teachers and local families, between an externally funded organisation and the people it serves. This trust did not develop overnight. It was earned, gradually and deliberately, over fourteen years.

The process began with presence. Pat and Chang McCarthy, the Centre's founders, did not create the organisation from a distance. They moved to Changtu County. They lived in the community. They sent their own children to local schools. They became neighbours, not just service providers.

The process continued with listening. The Centre's programmes were not designed in a boardroom and imposed on the community. They were developed in consultation with local educators, families, and officials. The curriculum was adapted to the specific needs of Chinese learners. The scholarship criteria reflected community priorities. The Centre became known as an organisation that asked before it acted.

The process was reinforced by consistency. The Centre has operated continuously since 2012. It has survived winters, funding challenges, and the disruptions that affected so many other organisations. Families in Changtu County know that the Centre will be there tomorrow, next month, and next year. That reliability builds trust.

Trust is sustained by transparency. The Centre's financial reports are publicly available through GlobalGiving. Its quarterly donor updates detail how funds are used and what impact they achieve. There are no hidden agendas, no undisclosed funding sources, no unaccountable decisions.

A donation of £19 provides a one-month scholarship. You are supporting an organisation that has earned the trust of the community it serves.

Support an organisation built on trust

A Mother Chooses Education: When Rural Chinese Women Prioritise Their Daughters' Learning

In many parts of the world, mothers are the most powerful advocates for their children's education. Rural China is no exception. Despite economic pressures, cultural expectations, and the competing demands of daily survival, millions of rural Chinese mothers make extraordinary sacrifices to keep their children — and particularly their daughters — in school.

The I Love Learning Education and Training Centre in Changtu County has witnessed this commitment firsthand. Mothers who cannot read English themselves sit with their children as they practise vocabulary. Mothers who work twelve-hour days set aside a portion of their earnings for educational expenses. Mothers who never had the opportunity to attend secondary school are determined that their daughters will.

This maternal commitment is one of the reasons the Centre maintains its equal-scholarship policy. Co-founder Chang McCarthy, who leads the Changtu Women's Federation, has been a driving force behind the policy. She understands that in households where resources are limited, a scholarship designated for a girl can be the factor that keeps her in school when her education might otherwise be deprioritised.

The effects are intergenerational. A mother who fought for her daughter's education is more likely to have grandchildren who are educated. A daughter who completes her schooling is more likely to advocate for her own children's learning. The decision to prioritise education echoes across decades.

The Centre supports this maternal advocacy by keeping costs low and scholarships accessible. No child is turned away because their family cannot pay. The scholarship programme, funded by donations from around the world, ensures that a mother's commitment to her child's education is matched by the opportunity to fulfil it.

A donation of £19 provides a one-month scholarship. You are supporting not just a child, but the mother who believes in her.

Support the mothers who prioritise education

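Of Socks

from 下川友

Even though the rainy season had begun, and even though rain was forecast for that evening, in the end it never fell. The sky I had assumed would rain was bright, as if to catch me off guard, and the orange light pouring in through the window was deeper than it needed to be. As it lit the walls of the room and the corners of the desk, it had the kind of color that looked ready to scorch my skin at any moment, though of course it would not actually burn.

The neighborhood supermarket reopened after a long closure. When I stopped by on my way home, melon balls were lined up in the freezer case. Next to them were watermelon balls too. They really do look cute.

The light-blue raincoat we had ordered online to take to Fuji Rock had arrived. It suited my wife well.

Weekdays really are nothing but work. I get on the train in the morning and come home at night. I spend a lot of time apart from my wife. The face reflected in the window always looks a little tired. The sense that I won't be getting out of this for a while was stuck inside my head like a route map.

Even so, when I get home, the small events keep happening. The night we put a fried egg on yakisoba, the combination had a definite synergy. Salted mizu manju are delicious. For my birthday she made a no-bake cheesecake, and the blueberries on top are there every year without fail.

For my birthday my wife also made me a stuffed toy. It was made from a sock. At night she made tacos too. Tomato, lettuce, cheese, taco meat, and a slightly spicy sauce. I think my birthday was a happy one.

A little while ago I held a music session with friends. We kept making sound for hours, and I went home with the vibration still lingering deep in my ears. In Nakano I ate udon with friends, and also a half-and-half plate of omurice and curry. All of it was fun and delicious. On the way home, sleepiness arrived as if on schedule.

I want to keep building the stamina to go on doing the things I love.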

That guy

from An Open Letter

I don’t want to come off as cocky, but in the last few months I feel like I have had an incredible amount of success with women, in terms of people being interested in me. I haven’t even gone on dating apps yet, and my friend pointed out how she has never seen a guy get this much attention from women. I feel like in hindsight if I try to think about what advice I would even give myself or something like that, it’s difficult because I essentially didn’t really take any shortcuts. I did not try to catch any butterflies, I instead continued to build my garden. I did however do things like plant things that butterflies like, but mostly with the intention of me enjoying my garden. And I think a lot of other people are interested in that. Things are working out.

My greatest real underlying motivation is

from sugarrush-77

Wanting to create something beautiful and great. That’s where the startup dream comes from, the videogame stuff, the fiction stuff: everything comes from that central desire, and from the fact that I’ll regret it if I don’t.

floor crossers

from Things Left Unsaid

I am conflicted about the elected people who have crossed the floor to join the left. Five times now, and the rumour is that there are more to come. Part of me finds it quite offensive. It seems like such a non-democratic thing to do. A total betrayal to the voters who trusted them enough to go out and vote for them. At the same time though, another part of me is glad that it keeps happening. I might even say I find it funny. Really no different than how the right would feel if this was occurring in an alternate universe in the opposite direction.

There are opinions about how our rockstar PM has stolen the majority government (that he now has) by manipulating those MPs into joining his side. It's so ridiculous. No matter what propaganda they try to shove down our throats next, I will never believe it. Like what crap are they going to come up with next? How he used hypnosis, bribery, threats, torture, witchcraft, or maybe that he gave them all foot massages while his wife fed them grapes, or what? Stolen illegitimate majority, they say. Sure. Grow up. Welcome to politics.

The ones who crossed the floor are all educated, grown adults in government positions, making their own political career decisions. They made the decision to cross the floor all on their own. We see the outcome, but not the process, and we can only speculate about the reasons that brought them to make that final personal decision. They could have changed their minds and backed out up to a certain point in time when there would be no turning back. I don't imagine the journey to that point of no return was an easy one. They got there. They followed through.

The reasons for it happening are sort of irrelevant. I can’t help thinking that if they were satisfied with their original party they wouldn’t have even considered switching. It happened. Now we wait and see. Now the leadership is driving the bus. A majority opposition had the option to grab the steering wheel and throw them off course. A minority opposition is more like an unruly teenager near the back of the bus causing a distraction.

Less of a threat, and more of an annoyance than anything. Are we there yet? Why aren't we there yet? Hurry up. You're going the wrong way. Why aren't we there yet? Are we there yet? What are you doing? We're going to crash. Where are you taking us? Why are we not there yet? This is stupid. What are you doing? Where are we? This is the wrong road. That was the wrong turn. Why aren't we there yet? Are we there yet? And they haven't even left the driveway. The job of the opposition is to convince the other passengers that we all need to assume and fear that the driver is inevitably going to fail and run us off a cliff.

That is a simplistic spin on a complicated matter. I guess I'm just a passenger hoping the driver is going to get us where we want to be. The right would say that it is foolish to have a little faith that just maybe the leader and his government might know what they are doing.

I think this is a very volatile time in the world of politics, not just here, but everywhere, and I don't think it is constructive to assume or fear anything, or to expect instantaneous results.

from Unvarnished diary of a lill Japanese mouse

JOURNAL June 10, 2026

For now I can’t really see the light at the end of the road. We’re living a life we don’t like. It’s exactly the standard Japanese life, only a little better: work, sleep, work. We’re privileged, we have free moments that many people don’t, but they mostly serve to show us our alienation the rest of the time.

Of course we do things we enjoy, fine, but it’s not what we’d really like. We don’t want to wait until we’re old, that’s too sad, our youth is evaporating. Soon there will be nothing left, the bowl will be empty and we’ll be dried up.

Ah! Another year before my darling can apply for Japanese citizenship, we have to be patient. But still, waiting, always waiting, time passes like a train: if you don’t get on, it leaves without you.

The Companions Placed Along the Road

from Wayfarer's Quill

There are travelers we choose, and travelers we don’t. Yet the longer I walk this winding road, the more I suspect that choice was never the point.

In an older Sunday sermon, Bishop Barron reflected on a simple but unsettling truth: we don’t always get to choose the people we’re called to love. Some arrive like sunlight — easy, warm, familiar. Others enter our lives like weather we didn’t prepare for, testing the seams of our patience and the sturdiness of our compassion.

But what if every person who crosses our path is placed there with intention?

What if each encounter, whether pleasant or difficult, is a quiet invitation from God to grow?

Some companions teach us joy. Others teach us endurance. A few teach us forgiveness in ways we would never have chosen. Yet all of them, in their own way, shape the soul of the traveler we are becoming.

So the call is simple, though never easy: Don’t only love the ones who are easy to love. Love the ones you’re given.

For in the great pilgrimage of life, even the difficult companions may be the very ones who carve out deeper wells of grace within us.

#Reflection #BishopBarron #GraceInTheEveryday

The Silence Beside the Workbench

from Douglas Vandergraph

Chapter One

Jesus knelt before dawn with His hands resting open upon His knees, the packed earth cool beneath Him and the house still around Him. Outside, Nazareth had not yet become noise. A rooster called somewhere down the slope, then another answered from farther away, and the dark blue of morning held the village in that brief mercy before burdens remembered their owners. Jesus did not hurry His prayer. He was ten years old, small enough that the world still looked over Him, yet there was a stillness in Him older than the stones around the well. He breathed in the quiet, and in that quiet He listened to His Father as if nothing in heaven or earth was more natural than a boy kneeling before the day began.

Mary had risen but had not disturbed Him. She moved carefully near the hearth, setting a little grain aside, tending the small fire in a way that made almost no sound. Joseph’s tools lay against the wall, wrapped in their usual order, waiting for the work that would soon fill the morning. A shaved piece of cedar rested near the doorway where Joseph had left it the night before, its clean scent faint in the air. Jesus remained bowed. He prayed for His mother’s strength, for Joseph’s hands, for the village waking under its ordinary troubles, and for one house near the edge of the lane where a lamp had burned too late. Anyone searching for a story of Jesus of Nazareth at age ten might imagine wonder first, but the wonder of that morning was quiet enough to be missed by anyone in a hurry.

When He rose, He did not speak at once. He stepped outside and looked toward the narrow path where the neighbor’s courtyard opened behind a leaning wall. In that house lived Eliab, a boy nearly twelve, though grief had made him carry himself like someone much older. Eliab’s father had died the year before after a fever that had passed through Nazareth and taken three men before the figs were ripe. Since then, Eliab had learned how to lower his eyes before pity could reach him. He had learned how to answer quickly when adults asked if he was well. He had learned how to be useful so no one would notice how afraid he was. In a quiet companion reflection on young Jesus in Nazareth, the village might have seemed gentle from a distance, but inside its small rooms people still hid the things they did not know how to name.

Joseph came to the doorway with a leather strap in one hand and a patient look on his face. He watched Jesus watching the lane. “You are thinking of Eliab.”

Jesus turned toward him. “His mother’s lamp was still burning when the moon crossed the ridge.”

Joseph’s face softened, though the line between his brows deepened. “Mara is taking in more mending than her eyes can bear. Eliab brought a cracked yoke piece yesterday and asked if I would let him sweep the shop floor to pay for the repair. I told him the repair was small, but he kept standing there as if kindness were a debt he could not carry.”

Mary came near enough to hear. She wiped her hands on her outer garment and looked toward the same lane. “His mother asked for no help when I saw her at the well. That is not the same as needing none.”

Jesus looked down at His hands. They were still the hands of a child, but they had already learned the shape of wood, the weight of water, the roughness of rope, and the tenderness required when touching something cracked. “May I go to him after morning work?”

Joseph nodded slowly. “You may. But do not press where a wound is guarded. Some doors open only when a person is no longer afraid of what will be seen inside.”

Jesus received the words with the seriousness of prayer. Then He helped Mary with the water jar and carried kindling to the hearth before the sun cleared the rooftops. The day began as most days did, not with great events but with the steady demands that keep a household alive. Joseph took Him to the work place beside the house, where the first light reached the shavings on the ground and made them look almost golden. A farmer from Cana had sent word about a plow beam needing attention, and Joseph examined the wood with care, letting Jesus run His fingers along the split.

“Tell me what you feel,” Joseph said.

Jesus touched the grain gently. “It did not break all at once. It was strained for a long time before it opened.”

Joseph looked at Him for a moment, then back at the beam. “Yes. Many things are like that.”

Jesus did not answer, but His eyes went again toward Eliab’s house.

By the time the village fully woke, women were moving toward the well with jars balanced against hip or shoulder, men were calling to animals, and children who had finished early chores slipped into the lanes with the restless energy of those who still believed a day might become play if no adult caught them first. Jesus worked steadily. He brought Joseph the plane, gathered cuttings, held the far end of a board, and listened when Joseph explained how a weakened piece could sometimes be made useful again if joined properly and not forced beyond its strength. He listened with the whole of Himself, though part of His attention remained turned toward the house with the leaning wall.

Eliab appeared just before the sun climbed high enough to warm the stones. He came carrying a basket of wool scraps and torn garments, the load pressed against his chest. His hair was uncombed, and the strap of one sandal had been tied with a strip of cloth. He moved quickly, as if speed could keep him from being stopped. Two other boys, Natan and Joah, trailed behind him with the cruel curiosity of children old enough to know where to wound and young enough to pretend it was nothing.

“Careful,” Natan called. “If you drop those, your mother will have to sew the dirt back together too.”

Joah laughed. “Maybe Eliab can mend his sandal with his father’s old belt. Oh, I forgot. He sold it.”

Eliab’s face hardened, but he did not turn. His hands tightened around the basket until his knuckles whitened. Jesus set down the small wedge of wood He had been smoothing. Joseph had heard, and his jaw set, but he did not move yet. A rebuke from a grown man might silence the boys for a morning and make Eliab pay for it later in whispers. Jesus stepped from the shade of the work area and walked into the lane.

Natan saw Him and shifted his weight. He was older than Jesus by a year and enjoyed the advantage when it was easy. “We were only speaking.”

Jesus looked at him without anger. “Were you speaking to help him carry the basket?”

Natan’s mouth opened, then closed. Joah kicked at a stone.

Jesus went to Eliab and placed His hands beneath one side of the basket. “I can carry it with you.”

Eliab flinched at the offer more than he had flinched at the insult. “I do not need help.”

“I did not say you were weak.”

“I said I do not need help.” Eliab pulled the basket closer, nearly losing hold of it. A torn sleeve fell from the top and landed in the dust.

Jesus bent, picked it up, shook it clean, and laid it back without making the moment larger than Eliab could bear. “Then I will walk beside you.”

That seemed almost worse to Eliab, because help could be refused but presence had nowhere to be sent. He started forward again. Jesus walked at his side, not touching the basket now. Natan and Joah followed for a few steps but lost interest when no anger came to feed them. Joseph returned to the plow beam, though his eyes lingered. Mary watched from the doorway with the look of a mother who understood both boys more than either would have wanted.

The lane narrowed past the well, where several women stood speaking in low voices. As Eliab approached, their conversation changed shape. No one said anything unkind. That was part of the heaviness. Their pity came wrapped in lowered tones and softened faces, and Eliab felt every bit of it like dust clinging to sweat. Mara, his mother, was known to be proud in the way the poor become proud when they are tired of being measured by what is missing. Since her husband’s death, she had accepted grain once from an older cousin and had returned twice its worth in mending. After that, she had found ways to appear grateful without ever appearing in need.

Eliab walked faster. Jesus kept pace.

“You should go back,” Eliab muttered.

“I will, after you reach your house.”

“People will think I asked you.”

“No one has to know what they do not need to know.”

Eliab glanced at Him then, suspicious of gentleness that did not demand recognition. “Why do you care?”

Jesus looked ahead to the leaning wall. “Because you are carrying more than cloth.”

The words struck the place Eliab had sealed shut. His face changed for an instant, not into openness but into fear, as if Jesus had put His hand on a hidden latch. “Do not say things like that.”

“I will not say more now.”

They reached the courtyard. The gate hung unevenly, and the clay jar near the entrance had a crack that had been sealed with pitch. From inside came a cough, then the scrape of a stool. Mara appeared in the doorway with a needle tucked into the cloth at her shoulder and dark circles beneath her eyes. She looked first at Eliab, then at Jesus, and something like embarrassment crossed her face.

“Jesus,” she said, gathering herself quickly. “Peace to you.”

“Peace to your house,” He answered.

Eliab set the basket down too hard. “He followed me. I told him not to.”

Mara’s eyes moved over her son, reading more than his words. “Then thank him, even if you did not ask.”

“I do not need every person in Nazareth thinking we cannot carry our own basket.”

The courtyard grew still. Mara’s face tightened, not with anger first, but with weariness exposed too suddenly. “Go inside and wash your hands.”

“I have work.”

“You have a mouth that is running ahead of wisdom. Go inside.”

Eliab’s shoulders lifted as if he might argue, but he looked at Jesus and seemed ashamed of being witnessed. He snatched the basket again and pushed past his mother into the house.

Mara closed her eyes briefly. “Forgive him. He is not himself.”

Jesus did not look away from the doorway where Eliab had vanished. “He is afraid he is all that is left.”

Mara’s hand went to the doorframe. The words did not offend her. They tired her because they were true. “He heard men talking after the burial. They said a widow’s house is a sinking roof unless a son learns quickly. They did not know he was behind the wall.”

Jesus turned back to her. “Does he think he must become his father?”

“He thinks if he does not, we will lose everything.” Mara tried to smile and could not make it stay. “He was gentle before. He used to sing nonsense to the goats and make his little sister laugh until she spilled water. Now if she laughs too loudly, he tells her to save her strength. He counts the grain. He watches my hands. He wakes when I cough. Sometimes I find him sitting by the tools as if staring at them long enough will teach him how to be a man.”

“Where is his sister?”

“With my sister today. I had too much work to keep her from pulling the thread.”

Inside the house, something wooden struck the floor. Mara winced but did not turn. Pride fought concern on her face and lost. “I should go in.”

Jesus stepped back. “I will tell my mother you may need oil for the lamp.”

Mara’s eyes sharpened. “I did not ask for oil.”

“No,” Jesus said gently. “You stayed awake without it.”

For a moment, Mara looked as if she might refuse the kindness before it had even been offered. Then her shoulders lowered, just a little. “Tell your mother nothing that will make the women speak.”

“I will tell her only what love needs to know.”

Mara’s mouth trembled. She pressed her lips together and nodded once, then went inside.

Jesus returned through the lane slowly. The village had become bright now, full of sound and sun, but the morning’s prayer had not left Him. He passed the well where the women still talked. One of them, an older woman named Tirzah, touched His shoulder lightly.

“You were at Mara’s house?”

“Yes.”

“She will not accept help.”

Jesus looked up at her. “Then perhaps help should arrive without shaming her.”

Tirzah sighed. “That is more difficult.”

“Yes,” Jesus said.

The woman studied Him, then gave a small, almost puzzled smile. “You speak like your mother when she is quiet and like Joseph when he has decided not to be hurried.”

Jesus received that without pride and continued home.

By noon, the heat pressed down on the roofs, and work slowed. Joseph set aside the plow beam and shared bread with Jesus in the shade. Mary had sent a small bowl of olives and a piece of goat cheese, and they ate simply. Jesus asked whether there was extra oil in the house. Joseph looked toward Him with the attention he gave to wood before deciding where to cut.

“For Mara?”

Jesus nodded.

Joseph broke his bread in two and gave Jesus the larger piece without comment. “There is some. Not much.”

“Enough for tonight?”

“Enough for tonight.”

Jesus looked at the bread in His hand. “She will not want to receive it.”

“No,” Joseph said. “Sometimes people who have been wounded by loss feel wounded again by kindness. It tells them the loss is visible.”

Jesus sat with that. Across the lane, a little girl ran after a chicken and laughed. The sound floated briefly and then disappeared behind a wall. Jesus thought of Eliab telling laughter to save its strength.

“Can a boy become hard because he is trying to be faithful?” Jesus asked.

Joseph wiped his hands and leaned back against the wall. “Yes. A man can too.”

“Then someone must show him that faithfulness is not the same as fear.”

Joseph’s eyes rested on Jesus for a long while. “And how will you show him?”

Jesus looked toward Eliab’s house. “By staying near enough for him to become angry at mercy.”

Joseph did not smile, though tenderness moved across his face. “That may be costly.”

Jesus lowered His eyes. “Mercy often is.”

The afternoon brought the first consequence. Eliab came running to Joseph’s work area with his face pale and his breathing broken. Sweat darkened his tunic at the neck. He stopped at the edge of the shade as if the threshold itself accused him.

“The shelf,” he said.

Joseph rose at once. “What shelf?”

“My father’s shelf. In the house. It came loose. The jars fell.” His voice tightened. “One broke. The oil.”

Jesus stood too, already understanding more than the words carried. “Was anyone hurt?”

“No.” Eliab swallowed. “My mother tried to catch it, but it struck her wrist. She says it is nothing. It is not nothing.”

Joseph reached for his tool bundle. “I will come.”

Eliab’s eyes filled with panic. “We cannot pay.”

“I did not ask.”

“I can work. I can sweep. I can carry wood. I can—”

“Eliab,” Joseph said, firm but not harsh, “a loose shelf does not wait until a house can afford it.”

The boy looked cornered by mercy again. His gaze shifted to Jesus, and something wounded in him turned sharp. “You told them.”

Jesus met his anger quietly. “I told no one about the shelf.”

“You told them we needed oil.”

“I asked because your lamp had burned late.”

“You saw too much.”

Jesus did not deny it. “I saw a light in a tired house.”

Eliab’s eyes flashed. “You think that makes you good?”

Joseph paused, tool bundle in hand. The lane seemed to quiet around them. Jesus stood very still, not offended, not retreating. “No.”

The answer was so simple that Eliab seemed not to know where to put his anger. His mouth twisted, and for a moment he looked younger than twelve, younger than grief had allowed him to be. Then he turned and hurried back toward his house, leaving Joseph and Jesus to follow.

Inside Mara’s home, the shelf had pulled from the wall where old clay had crumbled around the wooden peg. Two jars lay cracked on the floor, and a dark stain of oil had spread into the dust. Mara sat near the hearth with her wrist held against her chest, refusing to weep from pain or humiliation. The room smelled of spilled oil, broken clay, and the faint sourness of fear. Eliab stood in the corner, rigid, watching Joseph examine the wall. He looked as if every crack in the house were a charge against him.

Joseph worked without ceremony. He did not speak of payment. He did not make pity visible. He asked Jesus for the smaller peg, then the mallet, then a strip of leather to brace the weakened place. Jesus moved quietly, each action careful, while Mara’s eyes followed Him. Eliab did not help at first. He hovered, tense with the uselessness he hated.

At last Joseph said, “Eliab, hold this steady.”

The boy stepped forward too quickly, eager for a task that sounded like proof. He gripped the shelf while Joseph reset the support. Jesus stood on the other side, holding the brace. Their hands came close but did not touch. Eliab stared at the wood.

“My father made this,” he said, almost too low to hear.

Joseph did not stop working. “Then we will honor his work by strengthening it.”

“It failed.”

“It carried weight for many years.”

“It failed when we needed it.”

Joseph’s hands slowed. Jesus looked at Eliab, but the boy kept his eyes on the shelf. The words were not about wood. Everyone in the room knew it, and because they knew it, no one rushed to answer.

Mara made a small sound. “Eliab.”

He shook his head. His grip tightened. “It failed.”

Jesus spoke softly. “Your father did not fail because he died.”

Eliab’s face went white. “Do not speak of him.”

Jesus did not move closer. “You are trying to hold up what only God can hold.”

“Be quiet.”

Joseph’s eyes flicked toward Jesus, not warning Him away, but weighing the moment. Mara covered her mouth. The repaired shelf creaked as Eliab’s hands trembled against it.

Jesus continued, His voice still gentle. “You loved him. You miss him. You are angry that he is gone. And you are afraid that if you stop being strong, your house will fall too.”

Eliab released the shelf as if burned. The wood shifted, and Joseph caught it with one hand. “I said be quiet!”

The shout filled the little room. Outside, a goat bleated, absurdly ordinary. Mara began to stand, but pain pulled her back. Eliab looked at his mother, then at Joseph, then at Jesus, and shame rushed in after anger. He backed toward the doorway.

“I will get more clay,” he muttered.

Joseph said his name, but Eliab was already gone.

For a few breaths, no one spoke. Jesus looked toward the open door, where sunlight lay across the threshold. He had not resolved the wound. He had opened it. That was different, and it hurt more.

Mara’s eyes shone with tears she would not let fall. “He has not said he misses him. Not once.”

Jesus bent and picked up a broken piece of the oil jar. He held it carefully so the sharp edge would not cut Him. “He thinks missing him will make him less able to protect you.”

Mara looked at her wrapped wrist. “And I have let him think it, because I was afraid if he became a child again, I would have no one standing beside me.”

Joseph lowered the shelf into place and set the brace. His voice was quiet. “A child should not have to become a husband to his mother.”

Mara’s face broke then, not loudly, but in a way that seemed to take strength from her whole body. She turned aside, pressing her injured wrist against her chest, and wept without covering it quickly enough. Jesus did not stare at her tears. He set the broken clay aside and waited as if sorrow were not something shameful that needed to be hidden before God could enter the room.

When the shelf was secure, Joseph gathered the pieces of broken jar and carried them out. Jesus remained long enough to sweep the spilled dust and oil into a small dark mound near the doorway. Mara watched Him, breathing unevenly.

“Will he hate You for saying it?” she asked.

Jesus looked toward the lane where Eliab had disappeared. “He may hate being seen before he is ready to be healed.”

“And what do we do with that?”

Jesus lifted the broom and rested it against the wall. “We do not stop loving him when he makes love difficult.”

Mara closed her eyes. The words landed in the room with a weight that did not crush. They simply stayed.

When Jesus stepped back into the sunlight, Eliab was nowhere near the clay pit or the lane. Jesus looked toward the lower terraces where boys sometimes went when they did not want to be found. Joseph came beside Him, carrying the tool bundle.

“Will you go after him?” Joseph asked.

Jesus watched the heat tremble above the stones. “Not yet.”

Joseph nodded. “Why?”

“Because he ran from truth, not from danger. If I follow too soon, he will only run farther.”

They walked home together. The village carried on around them. Someone bargained over figs. A woman scolded a child for splashing at the well. A donkey refused to move until its owner gave up shouting and pulled with both hands. Life did not pause because one boy had been exposed in his grief. That was part of the sorrow of the world. People could break inside while the market still opened, the bread still baked, and neighbors still asked ordinary questions.

Jesus returned to Joseph’s work area, but the day had changed. The repaired plow beam waited, yet His thoughts stayed with Eliab. He could still see the boy’s hands trembling against the shelf, still hear the anger that had risen because sadness had no other door. Mary brought water in the late afternoon, and Jesus drank. She touched His hair lightly, smoothing back dust.

“You spoke truth today,” she said.

Jesus looked up at her. “It hurt him.”

“Yes.”

“He was already hurting.”

“Yes.”

Jesus held the cup with both hands. “Truth can feel like another wound when someone has been hiding the first one.”

Mary sat beside Him in the shade. “And yet hidden wounds do not become clean by staying hidden.”

He looked toward the hills. The sun had begun its slow descent, softening the village edges. Somewhere below, Eliab was alone with the thing he had been trying not to know. Jesus knew He would see him again before night. He also knew the next meeting would not be easier.

As evening approached, Joseph sent Jesus to return a borrowed awl to a man whose courtyard looked over the lower path. Jesus took the tool and went alone, not because He needed to pass near the terraces, but because obedience often moved through ordinary errands. He returned the awl, accepted the man’s blessing, and began the walk back as shadows gathered. Near the low stone wall beyond the last houses, He heard the sound of someone striking rock with a stick.

Eliab stood among the scrub and stones, hitting the ground again and again until the stick splintered. His face was wet, though whether from sweat or tears was hard to tell in the fading light. He saw Jesus and froze.

“Go away,” he said.

Jesus stopped several paces off. “I am on the path home.”

“Then keep walking.”

Jesus looked at the broken stick in Eliab’s hand. “Does the ground answer when you strike it?”

Eliab’s jaw clenched. “You think everything you say means something.”

“No.”

“You said I was afraid.”

“You are.”

Eliab lifted the stick as if he might throw it, then lowered it. “My father said he would teach me how to fit a door before winter. He said I was old enough to learn properly. Then he became hot with fever, and men carried him out, and everyone kept saying God is merciful.” His voice cracked on the last word. “What does that mean when the door still does not fit and my mother sits awake because there is not enough oil?”

Jesus listened. The wind moved lightly through the dry grass. From the village, faint voices carried upward, blurred by distance.

Eliab wiped his face angrily. “If I miss him, I cannot work. If I cry, my mother will cry. If I am a child, we will starve. So tell me, Jesus. What am I supposed to do with all that?”

Jesus did not answer quickly. He stepped closer, only one pace. “Bring it to God without pretending it is smaller.”

Eliab gave a bitter laugh. “That is what people say when they have bread.”

Jesus received the accusation without defense. “Then say that to Him too.”

The boy stared at Him.

Jesus’s voice remained quiet. “If you think God has been hard with you, do not speak to Him as if you think He has been gentle. If you are angry, do not dress anger like obedience. If you are afraid, do not call fear responsibility. He already knows what is true. Prayer is not where you hide from Him. It is where you stop hiding.”

Eliab’s breathing changed. The stick slipped from his hand. “I do not know how.”

Jesus looked toward the village, where lamps were beginning to appear one by one. “Then begin with what you just said.”

“I cannot.”

“Not yet,” Jesus said. “But you will have to choose. You can keep trying to become your father, or you can let God be Father to you in the place where yours is gone.”

Eliab turned away, but not before Jesus saw the tears come fully. The boy covered his face with both hands and bent forward under the force of what he had held back for months. Jesus did not touch him. Not yet. He stood near in the falling light while Eliab wept like someone who had finally stopped guarding a door that had already been broken.

The village lamps brightened. Somewhere in Nazareth, Mara waited with an injured wrist and a repaired shelf. Joseph’s work lay unfinished for morning. Mary tended the hearth. The day that began in prayer had led to a boy standing among stones with grief uncovered, and still nothing was fixed completely. That was how mercy often began. Not with everything healed, but with the first honest sound after a long silence.

Chapter Two

Eliab did not go home quickly after the tears stopped. He stood with his back to Jesus and wiped his face on his sleeve until the cloth was damp and streaked with dust. The sky had deepened into evening, and the first coolness came down from the hills, touching the stones that had spent the day holding heat. He looked embarrassed by the silence more than by the weeping itself, as if silence gave memory too much room to speak.

Jesus waited on the path, close enough to remain with him and far enough not to trap him. The village below them had begun to gather into night. Lamps burned behind small openings. Smoke rose from roofs. Voices softened as families came inside. Nazareth looked peaceful from where they stood, but Eliab knew what waited beneath that peace. His mother would ask where he had gone. His sister would ask why his eyes were red. The shelf would be repaired, but the oil was gone. The house would still be the house where his father did not sit.

“You should not tell anyone,” Eliab said at last.

“I will not tell what is yours to speak.”

Eliab turned enough to look at Him. “People already talk.”

“Yes.”

“They say things even when they pretend not to.”

“Yes.”

“They look at my mother as if she is a cracked jar.”

Jesus looked toward the village. “Some people do not know how to see pain without making the person feel smaller.”

Eliab pressed his lips together, because that was exactly what he hated and could not have said so clearly. “I hate it when they bring bread.”

“Because you need it?”

“Because they know we need it.”

Jesus nodded. “That can feel heavy.”

“It is heavy.” Eliab kicked a loose stone, sending it down the slope until it struck the wall below. “If my father were here, no one would bring bread.”

Jesus did not argue, though both of them knew there were houses with fathers where hunger still entered. This was not the moment to correct every thought. Some words were not meant to be measured first. They were meant to be brought out of the dark where they had grown too large.

After a while, Eliab said, “I do not want my mother to see me like this.”

“She may need to.”

“No.” The answer came quickly, with fear under it. “She has enough.”

Jesus turned toward him. “Does she have you?”

Eliab frowned. “What does that mean?”

“Does she have her son, or does she only have the guard you have placed at the door?”

The boy looked away. His face tightened again, not with anger this time, but with the strain of understanding something before he was ready to accept it. “If I stop, everything falls.”

“You are not holding everything.”

“You do not know that.”

Jesus’s eyes were steady. “I know you are tired.”

Eliab swallowed hard. The truth had become less like a blade and more like a hand resting on his shoulder, which made it harder to fight. He bent, picked up the broken stick, and turned it over in his hands. “I should go.”

“I will walk behind you.”

“Why behind?”

“So you do not have to feel watched.”

Eliab gave Him a suspicious glance, but he did not refuse. They descended toward the village with several paces between them. Jesus moved quietly. Eliab walked faster when they passed houses where voices could be heard. At his own courtyard, he stopped so suddenly that Jesus stopped too. The doorway glowed with low lamplight. Mara’s shadow moved across the wall inside. A smaller shadow moved after hers, quick and uneven, and Jesus knew Eliab’s little sister had returned.

Eliab did not enter.

From inside, the girl’s voice rose. “Mother, will Eliab come back before the bread is gone?”

Mara answered with forced calm. “He will come.”

“He is always angry.”

“He is not always angry.”

“He told me not to sing.”

Mara’s answer came late. “Then sing quietly tonight.”

Eliab’s face changed as if the words had struck him harder than Natan’s mockery. He had not known his anger had entered his sister’s little joys and told them where to sit. He stared at the doorway with shame gathering under his eyes.

Jesus did not speak. He simply stood behind him on the path.

Eliab stepped inside. Jesus remained outside the courtyard wall, unseen by Mara at first. He heard the small shift in the room when Eliab entered, the way silence can announce a person more loudly than a greeting.

“Where were you?” Mara asked.

Eliab’s voice was low. “By the lower stones.”

“You frightened me.”

“I know.”

His sister, Dalia, spoke with the bluntness of six years. “Your face is dirty.”

Eliab did not snap at her. “I know.”

Mara must have seen more then, because her voice softened. “Come here.”

“I am fine.”

“No,” she said, and there was a tremble in the word that did not weaken it. “Come here as my son, not as the man of the house.”

Eliab did not move. Jesus could not see him, but He could feel the resistance in the pause. The boy’s whole false life stood at that threshold. To cross it would mean letting his mother see the child grief had buried alive. To refuse would mean keeping the house in its cold order, where no one sang loudly and no one cried safely.

“I do not know how,” Eliab said.

Mara wept then, not much, but enough that Dalia became quiet. “Neither do I.”

That honesty entered the house like fresh air through a room closed too long. Eliab made a sound that was almost a breath and almost a sob. Jesus heard the scrape of a stool, then the soft collapse of someone kneeling. Mara whispered her son’s name. Dalia asked if she should bring water, and for the first time in many months, Eliab laughed through tears. It was brief and broken and full of pain, but it was laughter.

Jesus turned to go. He did not need to be seen in that moment. Mercy had reached the door, and the door had opened from the inside.

When He returned home, Mary was waiting with a bowl covered by cloth. She did not ask many questions. She looked at His face and understood enough. Joseph sat near the doorway repairing a strap by lamplight. He glanced up, then toward the bowl.

“Your mother kept food warm.”

Jesus washed His hands and sat with them. The house smelled of lentils and bread, and the quiet there was different from the quiet in Mara’s house. It held trust. Jesus ate slowly. Mary watched Him in the way mothers watch children who carry more than the day should have given them.

“Did he go home?” she asked.

“Yes.”

“Did he speak?”

“Yes.”

Joseph tied off the strap and set it aside. “Then the first gate has opened.”

Jesus looked at the small flame between them. “The gate is open, but the road is still hard.”

Joseph nodded. “A boy can tell the truth in the evening and still be afraid by morning.”

That proved true before the sun had risen fully the next day. Eliab came to Joseph’s work area carrying two of his father’s tools wrapped in cloth. His eyes were swollen, but his jaw had returned to its stubborn line. He waited until Joseph looked up, then laid the bundle down as if he were placing an offering on an altar.

“I want you to teach me to use these,” he said.

Joseph wiped sawdust from his hands. “Good tools.”

“They were my father’s.”

“I know.”

“I need work.”

Joseph did not touch the bundle yet. “You need to learn.”

“I can learn while working.”

“You can learn by watching first.”

Eliab’s mouth tightened. “Watching does not buy oil.”

Jesus, who had been smoothing a small peg, looked up. The words were honest, but fear had dressed itself in responsibility again. It had only changed garments overnight.

Joseph unfolded the cloth. Inside lay a chisel with a worn handle and a small adze, both cared for but unused since Eliab’s father died. Joseph lifted the chisel and tested its edge. “This needs sharpening.”

“I can sharpen it.”

“Have you sharpened one before?”

“I have seen him do it.”

Joseph set the chisel down carefully. “Seeing is not the same as knowing.”

Eliab’s face burned. “Then teach me quickly.”

“There are things that cannot be taught quickly without harming the learner or the work.”

“I do not have time to be a child.” The words came out louder than he intended. A passerby looked over, and Eliab’s shoulders stiffened.

Jesus rose and came near, but He did not enter the conversation too quickly. Joseph folded the cloth back over the tools, not dismissing them, simply covering them from the dust.

“Eliab,” Joseph said, “I can teach you. I will not make you into your father by sundown. I will not pretend you can carry a man’s trade before your hands are ready. That would not honor him.”

“My mother needs coin.”

“Your mother needs a son who does not cut his hand open trying to prove he is a man.”

Eliab looked away.

Joseph’s voice softened. “Come three mornings each week after your first chores. Sweep, carry, watch, ask. When your hands are ready, you will shape small pieces. When those are true, you will shape larger ones. If work comes that can be paid fairly to a learner, I will tell you. But I will not put fear in charge of the tools.”

The offer was kind, measured, and humiliating to the part of Eliab that wanted urgency to become adulthood. He looked at Jesus as if expecting Him to add something. Jesus only said, “A tool obeys the hand that has learned patience.”

Eliab almost smiled despite himself, but pride caught it. “Everything with You becomes patience.”

“Not everything,” Jesus said. “Some things become surrender.”

The word unsettled him more than he wanted to show. He snatched up the covered tools. “I will come tomorrow.”

Joseph nodded. “Come before the heat.”

Eliab left, and for a little while hope seemed possible. But rising truth often awakens the very fear it threatens, and by midmorning Eliab’s fear had found another road. Mara sent him to the well with a jar that had survived the broken shelf. Dalia followed, singing under her breath because the night before had given her permission again. Eliab let her sing until they neared the well and saw Natan and Joah sitting on the low stones with two other boys. Then he turned sharply.

“Stop.”

Dalia’s song died. “Why?”

“Just stop.”

She hugged the small cup she carried. “Mother said I could sing.”

“Not here.”

The boys noticed them. Natan leaned back, grinning. “Eliab, did you cry so loudly last night that the dogs hid?”

Joah laughed. “Maybe Jesus taught him to weep properly.”

Eliab’s hand tightened on the jar rope. Dalia looked from the boys to her brother, frightened by the change in him. At the edge of the well path, Jesus had come with Mary to draw water, though He remained a little behind her with another jar. He saw the moment take shape before anyone else did. Eliab had been seen in weakness, and now shame demanded payment from someone smaller.

Natan hopped down from the stone. “Careful with that jar. If you break another one, your mother will have to borrow from every house in Nazareth.”

Eliab stepped forward. “Say her name again.”

Natan’s grin faltered, then returned because the other boys were watching. “Mara, Mara, Mara.”

Eliab swung the jar. He did not mean to strike Natan’s head, but anger rarely asks where it will land once it is allowed to lead. The jar clipped Natan’s shoulder and flew from Eliab’s hand, breaking against the stones near the well. Water spread across the dust. Dalia screamed. Natan stumbled back, more shocked than injured, and Joah shouted for his mother. The women at the well turned all at once. Mary moved quickly to Dalia and drew her aside. Jesus stepped between Eliab and the boys before another blow could come.

The scene hardened around Eliab. Every face looked at him. Every whisper he hated had become possible now. Natan rubbed his shoulder, red-faced with embarrassment. “He hit me.”

“You mocked his mother,” one woman said, but her correction came too late to undo the broken jar.

Eliab stared at the shards as if he had awakened and found his own hands guilty. The jar had been one of the last good ones. He looked at Dalia, whose small mouth trembled. He looked at Jesus and saw no accusation there, which somehow made it worse.

“I did not mean to break it,” he said.

Natan, recovering his pride, spat toward the ground. “Widow’s son cannot even carry water.”

Eliab lunged again, but Jesus caught his wrist. He did not grip hard, yet Eliab could not pull free. The strength in that small hand startled him. Jesus looked into his face with a sadness that held him more firmly than fingers.

“If fear leads you,” Jesus said, “it will make you harm the people you are trying to protect.”

Eliab’s breath shook. “Let me go.”

“I will. But not into more sin.”

The word pierced him. Sin was not a word adults used for grief, and Eliab wanted grief to excuse everything that followed it. He pulled back, and Jesus released him. The boy stood surrounded by water, shards, staring villagers, and the crying sister he had silenced once again.

Mary knelt and began gathering the larger pieces so no child would cut a foot. Dalia clung to her. Natan’s mother arrived in a rush, full of anger and worry. She examined her son’s shoulder, then rounded on Eliab.

“Has your house lost all discipline?”

Mara arrived moments later, drawn by the shouting. Her injured wrist was wrapped, and her face showed that she had come too fast. She saw the broken jar, Dalia crying against Mary, Natan’s mother furious, and Eliab standing in the middle of it all with shame closing around him.

“What happened?” Mara asked.

No one answered gently. Several spoke at once. The story became tangled with accusation, defense, pity, and judgment. Mara’s face went pale. Eliab stared at the ground and did not defend himself. That silence, which once had looked like strength to him, now looked like cowardice.

Jesus stepped beside him. “Natan mocked Mara. Eliab struck with the jar and broke it. Natan was not badly hurt, but Eliab did wrong.”

The clear truth quieted the crowd because it belonged to no side. Natan’s mother drew herself up, still angry but robbed of exaggeration. Mara closed her eyes. Eliab looked at Jesus with betrayal in his face.

“You told against me.”

Jesus looked at him steadily. “I told the truth for you before shame could make it crooked.”

Eliab’s lips parted, but no words came.

Mara turned to Natan’s mother. “I am sorry for my son’s hand against yours. We will make right what we can.”

Natan’s mother looked toward the broken jar and then back at Mara’s wrapped wrist. Her anger shifted uneasily under the eyes of the women. “See that he keeps away from my boy.”

Mara nodded. Then she turned to Eliab. “Pick up what broke.”

The command was simple, and he obeyed because there was nothing else left. He knelt among the shards, careful now, slow now, feeling each piece of clay as evidence. Dalia watched him from Mary’s side. When he reached for one sharp fragment, Jesus bent and moved his hand away.

“That one will cut you.”

Eliab whispered, “Good.”

Jesus’s face changed, not with shock, but with deeper grief. “No.”

The single word was quiet enough that no one else seemed to hear it. Eliab did. It entered the place where punishment had started to look like relief.

Jesus picked up the sharp piece Himself and placed it with the others. “You cannot pay for wrong by wounding what God made.”

Eliab’s eyes filled again, but this time he fought the tears because the village was watching. “Then what do I do?”

Jesus looked at Dalia, then at Mara, then at Natan standing behind his mother. “You begin by saying the truth without hiding behind what was done to you.”

Eliab’s throat worked. He looked at Natan, and anger still moved there. He looked at his mother and saw exhaustion. He looked at his sister and saw fear of him, which hurt most of all.

“I struck him,” Eliab said, barely audible.

Mara’s voice came softly. “Louder, son.”

He swallowed. “I struck him. I broke the jar. I frightened Dalia.” He looked at Natan’s mother, though the words seemed to cost him more than lifting any load. “I was wrong.”